I deploy everything from the terminal. No web interface, no CI service, no dashboard with green buttons. I type `deploy production` on my laptop, and the code goes live.

The setup is two pieces. A bash script handles the remote work: SSH in, pull the latest code, run hooks. A Node.js process on the server watches a file and reloads the app cluster when that file changes. Between them, they do zero-downtime deploys in under ten seconds.

The deploy script

The script is adapted from TJ Holowaychuk’s deploy. I’ve been carrying a forked copy around, adding a config section for each project. A config file tells it where to go:

```
[vmblog]
user www-data
key keys/cactus
host vnykmshr.com
repo git@bitbucket.org:vnykmshr/vmblog.git
path /var/www/vmblog/releases
ref origin/master
post-deploy ./bin/deploy
```
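The format is about as small as a config format gets: a bracketed section name, then one `key value` pair per line. To make that concrete, here is a rough JavaScript sketch of a parser for it. `parseDeployConf` is a hypothetical name, not something the deploy script exposes, and it assumes keys never contain spaces, values may, and lines starting with `#` are comments.

```javascript
// Hypothetical parser for the deploy.conf format shown above:
// "[section]" lines open a project; "key value" lines set options on it.
function parseDeployConf(text) {
  const sections = {};
  let current = null;
  for (const raw of text.split('\n')) {
    const line = raw.trim();
    if (line === '' || line.startsWith('#')) continue; // blanks and (assumed) comments
    const header = line.match(/^\[(.+)\]$/);
    if (header) {
      current = sections[header[1]] = {};
      continue;
    }
    if (current === null) throw new Error('option outside a section: ' + line);
    const space = line.indexOf(' ');
    const key = space === -1 ? line : line.slice(0, space);
    const value = space === -1 ? '' : line.slice(space + 1).trim();
    current[key] = value;
  }
  return sections;
}

const conf = parseDeployConf(`
[vmblog]
user www-data
key keys/cactus
host vnykmshr.com
repo git@bitbucket.org:vnykmshr/vmblog.git
path /var/www/vmblog/releases
ref origin/master
post-deploy ./bin/deploy
`);
console.log(conf.vmblog.host); // vnykmshr.com
```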

One section per project. The deploy command SSHes into the server, resets the checkout to the configured ref, and runs a post-deploy hook. The hook is where the interesting part happens. The remote steps look like this:

```bash
# fetch source
run "cd $path/source && git fetch --all"

# reset HEAD
run "cd $path/source && git reset --hard $ref"

# link current
run "ln -sfn $path/source $path/current"

# deploy log
run "cd $path/source && echo \`git rev-parse --short HEAD\` >> $path/.deploys"

# post-deploy hook
hook post-deploy
```
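Each `run` line above sends one shell command to the server over SSH. The sketch below restates those steps as data, to show how the `path` and `ref` keys from a config section flow into the commands. `deploySteps` and `sshArgv` are hypothetical helpers, and the exact ssh invocation (`ssh -i key user@host "<command>"`) is an assumption about what `run` does, not a copy of it.

```javascript
// Hypothetical mirror of the remote steps above. `path` and `ref` come from a
// config section; each string is what the script hands to `run` over SSH.
function deploySteps({ path, ref }) {
  return [
    `cd ${path}/source && git fetch --all`,
    `cd ${path}/source && git reset --hard ${ref}`,
    `ln -sfn ${path}/source ${path}/current`,
    `cd ${path}/source && echo \`git rev-parse --short HEAD\` >> ${path}/.deploys`,
  ];
}

// Assumed ssh invocation: options, then user@host, then the remote command.
function sshArgv({ user, host, key }, command) {
  const argv = key ? ['-i', key] : [];
  return argv.concat([`${user}@${host}`, command]);
}
```

Each argv could be passed to `child_process.spawnSync('ssh', argv, { stdio: 'inherit' })` in order, stopping at the first non-zero exit status, with the post-deploy hook running last.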

It also keeps a `.deploys` file: one short commit hash per line, one line per deploy. `deploy revert` reads the previous entry and redeploys it; `deploy revert 3` goes three deploys back. Simple, stupid, works.
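The revert lookup is just line arithmetic on `.deploys`. A minimal sketch, assuming the file holds one hash per line with the newest (currently live) deploy last, so "n back" means the line n above the last one. `revertTarget` is a hypothetical name, not part of the script.

```javascript
// Hypothetical sketch of picking the commit for `deploy revert [n]`, given the
// contents of the .deploys log (oldest first, newest last, one hash per line).
function revertTarget(deploysLog, n = 1) {
  const hashes = deploysLog.split('\n').map((l) => l.trim()).filter(Boolean);
  const index = hashes.length - 1 - n; // the last line is the live deploy
  if (n < 1 || index < 0) {
    throw new RangeError(`cannot go back ${n} deploy(s); only ${hashes.length} recorded`);
  }
  return hashes[index];
}

const log = 'a1b2c3d\ne4f5a6b\n9c8d7e6\n0f1e2d3\n';
console.log(revertTarget(log));    // 9c8d7e6
console.log(revertTarget(log, 3)); // a1b2c3d
```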

The process monitor

The app server runs through a cluster wrapper: multiple worker processes behind a master, managed by recluster. The master watches a file, `public/system/restart`, and reloads the cluster whenever that file changes:

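```javascript
var fs = require('fs');
var util = require('util');

// `cluster` is the recluster instance created earlier in the master.
// watchFile polls the file's stats; only react when the mtime actually moved.
fs.watchFile('./public/system/restart', function(curr, prev) {
  if (curr.mtime.getTime() === prev.mtime.getTime()) return;
  util.log('Restart signal received, reloading instances');
  cluster.reload();
});
```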

cluster.reload() brings up new workers, waits for them to be ready, then shuts down the old ones. Requests in flight finish on the old workers. New requests go to the new workers. Zero downtime.
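The ordering is what makes the reload safe. Here is a simplified model of it, with the worker handling hidden behind two injected async functions. `rollingReload`, `spawnWorker` and `stopWorker` are hypothetical names; this is not recluster's implementation, only the invariant described above: no old worker stops before its replacements are ready.

```javascript
// Hypothetical model of the reload ordering (not recluster's code).
// spawnWorker() resolves once a new worker is ready to accept requests;
// stopWorker(w) resolves once the old worker has drained its in-flight requests.
async function rollingReload(oldWorkers, spawnWorker, stopWorker) {
  // Bring up a full set of replacements first, so capacity never drops.
  const fresh = await Promise.all(oldWorkers.map(() => spawnWorker()));
  // Only then retire the old set.
  await Promise.all(oldWorkers.map((w) => stopWorker(w)));
  return fresh;
}
```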

The deploy script’s post-deploy hook (`./bin/deploy`) touches the restart file. That’s the entire coordination mechanism between the deploy pipeline and the running application: a file modification event. No message queue, no API call, no socket. Just `touch public/system/restart`.
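On the sending side, the whole signal is a modification-time bump. The hook presumably just runs `touch`; the sketch below is a hypothetical Node equivalent, `touchRestartFile`, assuming the app root is passed in and that `public/system` may not exist yet on a first deploy.

```javascript
const fs = require('fs');
const path = require('path');

// Hypothetical Node equivalent of `touch public/system/restart`: bump the file's
// mtime (or create it), which is the change the watchFile() listener polls for.
function touchRestartFile(appRoot) {
  const file = path.join(appRoot, 'public', 'system', 'restart');
  const now = new Date();
  fs.mkdirSync(path.dirname(file), { recursive: true });
  try {
    fs.utimesSync(file, now, now);
  } catch (err) {
    if (err.code !== 'ENOENT') throw err;
    fs.closeSync(fs.openSync(file, 'w')); // first deploy: create an empty file
  }
  return file;
}
```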

The heartbeat

The cluster master also runs a heartbeat. Every ten seconds, it sends an HTTP request to localhost:

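```javascript
var http = require('http');

// `port` and reloadCluster() are defined elsewhere in the master.
setInterval(function() {
  var req = http.get('http://localhost:' + port, function(res) {
    res.resume(); // drain the body so the socket is released
    if ([200, 302].indexOf(res.statusCode) === -1) {
      reloadCluster('[heartbeat]: FAIL with code ' + res.statusCode);
    }
  });
  req.on('error', function(err) {
    reloadCluster('[heartbeat]: FAIL with ' + err.message);
  });
  // A request that hangs for ten seconds counts as a failure (reported via 'error').
  req.setTimeout(10000, function() {
    req.destroy(new Error('timeout'));
  });
}, 10000);
```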

If the app responds with anything other than 200 or 302, the cluster reloads. If the request hangs for ten seconds, the cluster reloads. A guard suppresses further reloads for ten seconds after a restart; without it, the heartbeat would keep triggering reloads while the new workers are still coming up. Even so, it’s aggressive: one failed check and it restarts. For a single-server app with a few hundred users, a false restart is cheap and a prolonged outage is not.
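The guard itself isn’t shown above. A minimal sketch of what `reloadCluster` might look like, assuming a fixed ten-second quiet window measured from the last reload that actually ran. `makeReloadCluster` is a hypothetical factory; the reload action and the clock are injected so the behaviour can be checked without real timers.

```javascript
// Hypothetical sketch of the reload guard: the returned reloadCluster() forwards
// to reload() at most once per quietMs, so heartbeat failures during a restart
// are ignored. Returns true if a reload ran, false if it was suppressed.
function makeReloadCluster(reload, quietMs = 10000, now = Date.now) {
  let lastReload = -Infinity;
  return function reloadCluster(reason) {
    const t = now();
    if (t - lastReload < quietMs) return false; // still inside the quiet window
    lastReload = t;
    console.log(reason);
    reload();
    return true;
  };
}
```

In the master this would be wired up as something like `var reloadCluster = makeReloadCluster(function() { cluster.reload(); });`. The boolean return value is only there to make the suppression observable.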

How it all fits

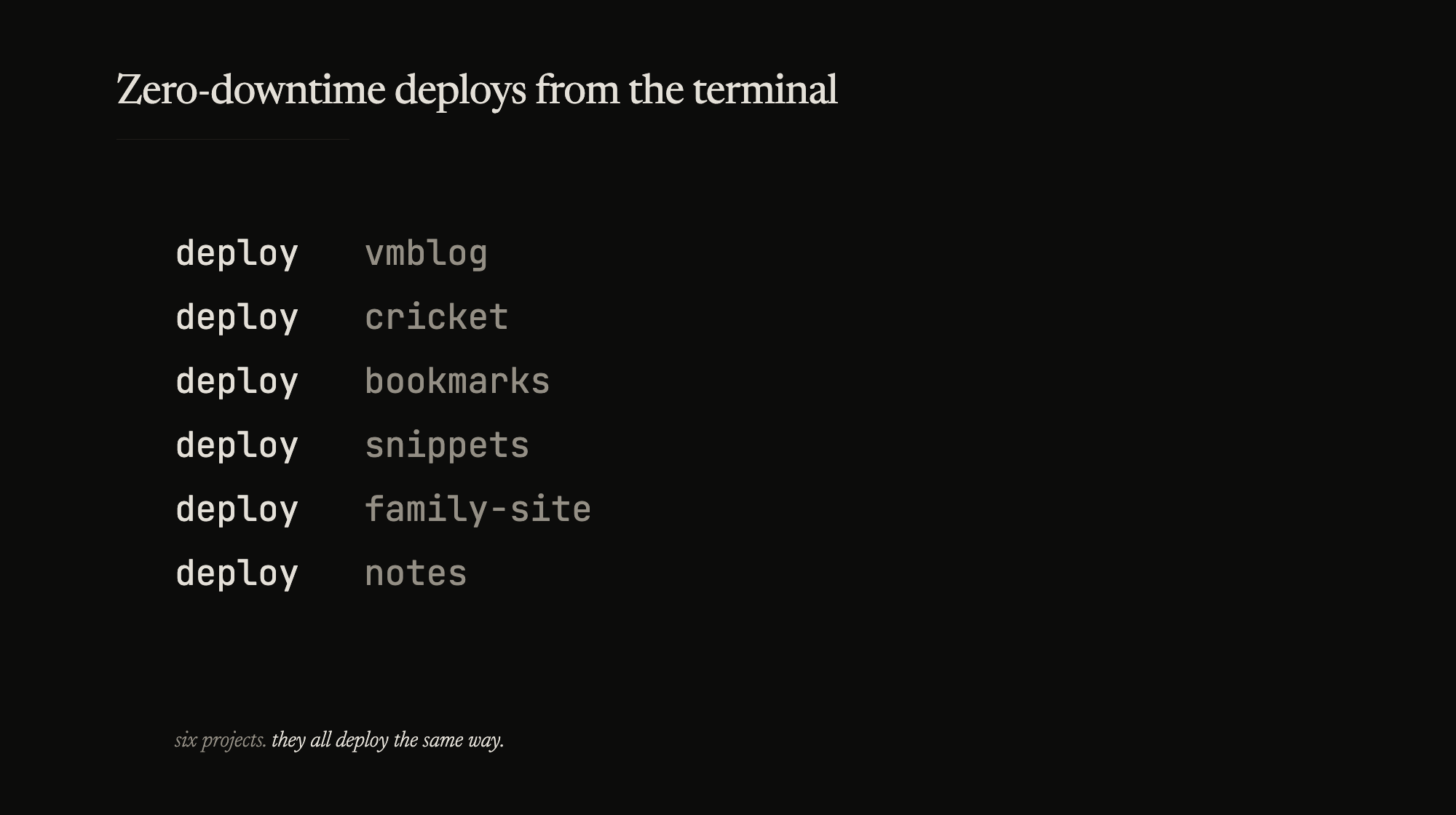

My deploy.conf has six sections right now. The blog. A cricket scores tracker I was messing with. A couple of side projects. A site I set up for family. My entire personal infrastructure in one config file.

I run one or two VPS boxes. All six projects deploy to the same hosts; each has its own repo path, its own current symlink, its own procmon instance. Clean boundaries. Adding a new project is five lines of config plus one `deploy setup` to clone the repo on the server. After that, deploying is always the same command. I don’t have to remember how each project deploys. They all deploy the same way.
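Since every project is just a config section plus the same directory convention (`source`, the `current` symlink, `.deploys`), those boundaries can be derived and checked mechanically. A hypothetical sketch: `projectLayouts` takes already-parsed sections and refuses two projects that point at the same directory on the same host.

```javascript
// Hypothetical: per-project layout implied by the deploy steps
// ($path/source, $path/current, $path/.deploys), keyed by section name.
function projectLayouts(sections) {
  const layouts = {};
  const owners = new Map(); // "host:path" -> section that claimed it
  for (const [name, { host, path }] of Object.entries(sections)) {
    const key = `${host}:${path}`;
    if (owners.has(key)) {
      throw new Error(`${name} and ${owners.get(key)} both deploy to ${key}`);
    }
    owners.set(key, name);
    layouts[name] = {
      source: `${path}/source`,
      current: `${path}/current`,
      deploys: `${path}/.deploys`,
    };
  }
  return layouts;
}
```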

No build step, no asset pipeline. The deploy pulls source and restarts. The whole thing is maybe 500 lines of bash and Node.js and I haven’t had to touch the core in months.