I use coding agents on my own private repos every day. Security research, side projects, things I wouldn’t put on a public GitHub. Not something I’d do blindly with work source code though.

So when someone turns off WiFi to prove the agent needs a network connection, I get it. But that’s the architecture. It’s on the pricing page. The agent works on your local files, the reasoning runs on a remote model. Both true, neither a secret.

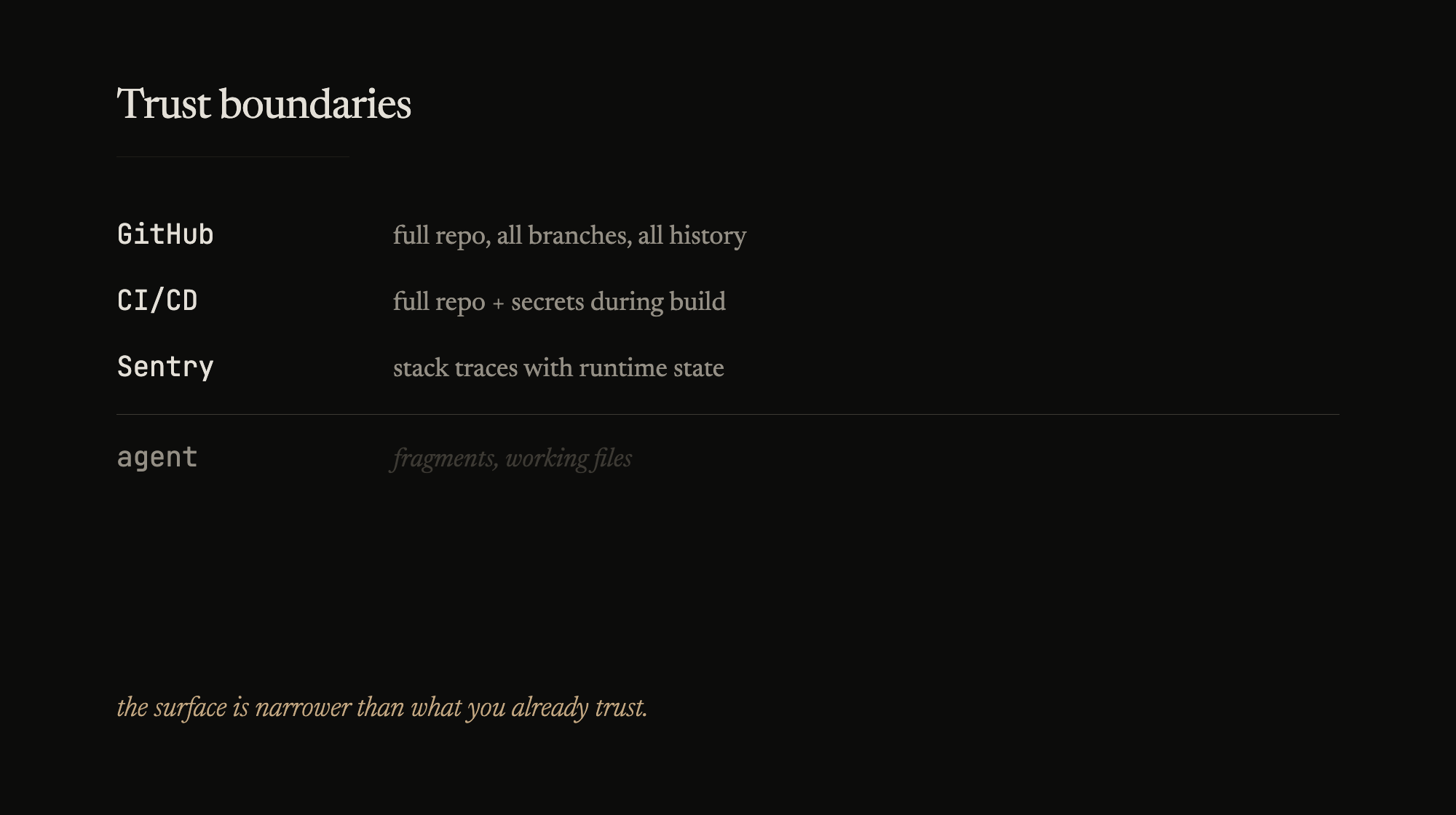

Your code has been leaving your machine for a decade though. GitHub has the full repo, all branches, all history. CI/CD gets the full repo plus secrets during build. Sentry captures stack traces with runtime state.

The coding agent sees less than any of those – fragments, files you’re working on, some search results. Less direct control over what’s included, sure, since the agent picks context based on the task. But the surface is narrower than what you already trust.

The thing people worry about is proprietary logic leaking. That’s not where the risk is. It’s secrets: a .env file in context, an API key in a config, a connection string you forgot about. Same discipline you should already have: a secrets manager, .env never committed, .gitignore hygiene. The agent didn’t invent this problem, just gave it another surface.
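To make that concrete, here is a rough sketch of a pre-flight check you could run over files before they end up in an agent’s context. Everything in it is an assumption for illustration: the names `SECRET_PATTERNS`, `SENSITIVE_NAMES`, `is_sensitive_name` and `find_secrets` are hypothetical, the pattern list is deliberately tiny, and it is not part of any agent’s tooling.

```python
import re
from pathlib import Path

# Hypothetical, deliberately small rule set. Real scanners ship far more rules.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM private key headers (RSA, EC, OPENSSH, ...).
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # Assignments like API_KEY=..., db_password: "...", auth-token = ...
    "credential_assignment": re.compile(
        r"\b[\w.-]*(?:api[_-]?key|secret|token|password)[\w.-]*"
        r"\s*[:=]\s*['\"]?[^\s'\"]{8,}",
        re.IGNORECASE,
    ),
    # Connection strings with inline credentials: scheme://user:pass@host
    "url_with_password": re.compile(r"\b[a-z][a-z0-9+.-]*://[^\s:/@]+:[^\s@/]+@"),
}

# File names that should not be handed to an agent at all (assumed list).
SENSITIVE_NAMES = {".env", "id_rsa", "credentials.json"}


def is_sensitive_name(path: str) -> bool:
    """True for .env, .env.<anything>, and the other names listed above."""
    name = Path(path).name
    return name in SENSITIVE_NAMES or name.startswith(".env.")


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, label))
    return hits


if __name__ == "__main__":
    sample = (
        "DEBUG=true\n"
        "AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE\n"
        "DATABASE_URL=postgres://app:s3cret@db.internal/main\n"
    )
    for lineno, label in find_secrets(sample):
        print(f"line {lineno}: {label}")
```

A check like this is crude and will produce false positives (a line such as `token = get_token()` trips the assignment rule). A dedicated secret scanner in a pre-commit hook does the same job better, but the idea is the same: catch the key before it reaches any third party.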

The broader “someone will steal my code” worry – I’ve built payment systems, distributed backends, things where the code was sensitive. Source code alone is almost never the competitive edge though. The product is what you build around it – the service, the operational knowledge, the failure modes you’ve encoded in runbooks over years of running the thing. If someone got your source code tomorrow, could they ship your product? Not close, most of the time. Open source proves this daily.

People confuse inference and training. When your code hits an API, it’s processed to produce a response, then falls under the provider’s retention policy. Most paid API tiers state explicitly that they don’t train on your data. Free tiers are often different, so read the terms. But “my code was processed” and “my code is now in the model” are very different claims, and the gap between them is where most of the anxiety lives.

Then there’s accumulation. Any single session is fragments. Over a year of daily use, those fragments add up in provider logs. Not an active threat, but the exposure grows. And if you’re in fintech, healthcare, anything regulated, sending code to a third-party API might be a compliance issue regardless of trust. That’s not paranoia, that’s legal exposure.

Right worry, wrong target. Three questions make it productive. What’s your threat model? What are your provider’s data terms? Are you keeping secrets out of context?

That’s operational hygiene, not anxiety.

The developers who should worry most aren’t reading posts about this. They’re committing credentials, running agents on prod infra, and not reading the ToS for any tool, AI or otherwise.