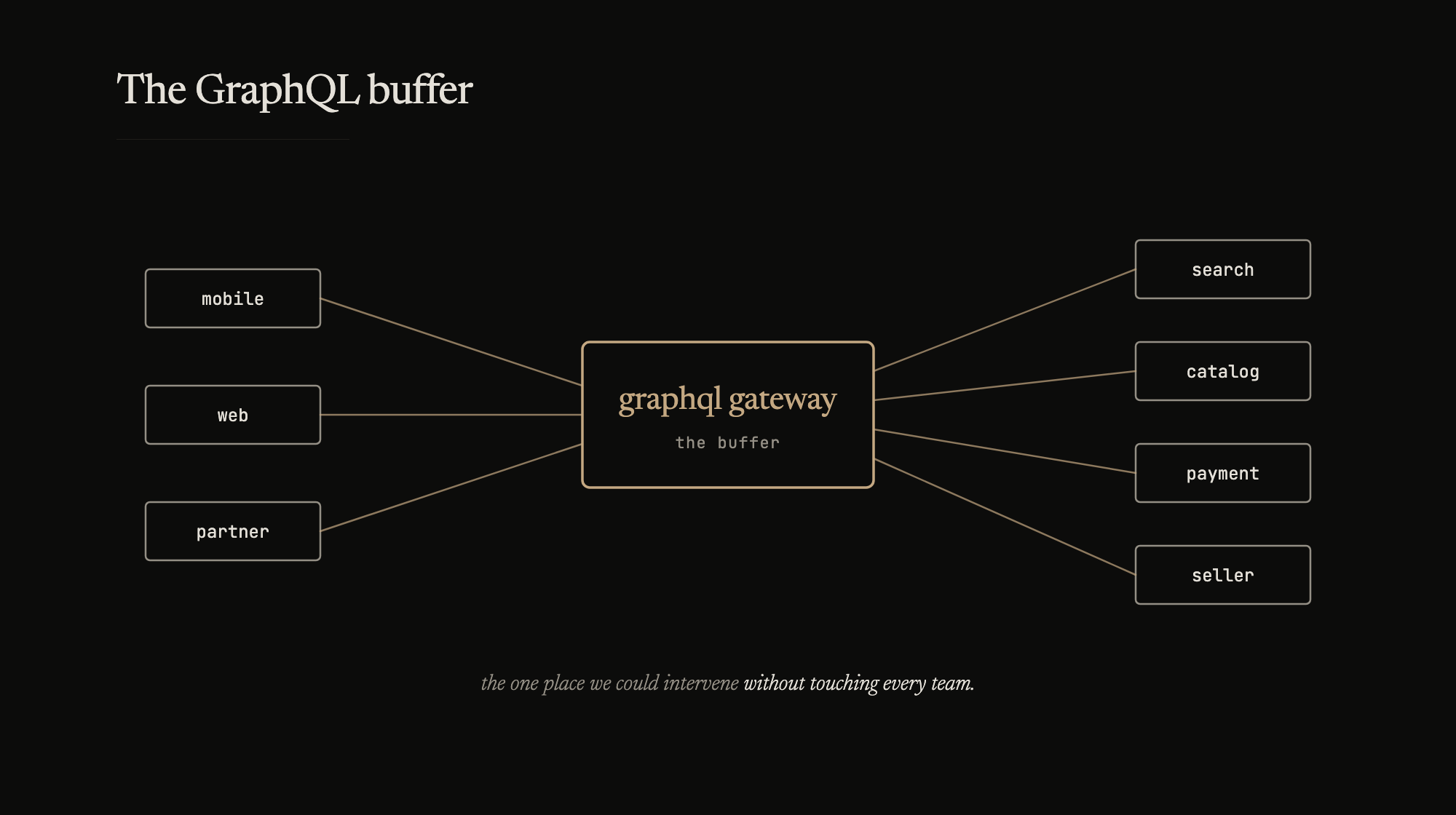

The GraphQL gateway started as a practical problem. We had mobile apps, web clients, and a growing number of backend services. Every client talked to every backend directly. When a new backend came up or an old one changed its API, every client needed updating. The gateway was supposed to fix that – one schema, one endpoint, clients talk to GraphQL, GraphQL talks to backends.

We built it in Go, starting from a fork of graphql-go. The fork grew over time – custom resolvers, caching layers, request batching, things we needed that the upstream didn’t have. We’d sync the fork every few months, but our changes kept piling up. There were five of us on the team, and most of the early work went into getting other teams to migrate their APIs onto the gateway. We built the base, got teams to add and own their own modules, then moved into a gatekeeping role – reviewing what went in, making sure the schema stayed coherent.

That was the plan. A unified interface for clients. What it became was something more useful.

The buffer

During our big annual sale event, traffic spiked beyond what the backends had headroom for. They started degrading – slow responses, rising error rates, some services timing out. The usual drill: check dashboards, find the bottleneck, fix the service, deploy.

Except we couldn’t deploy fast enough. The backends were spread across multiple teams, multiple codebases, multiple deploy pipelines. Fixing a backend meant finding the team, diagnosing the issue, writing the fix, reviewing it, deploying it. That’s an hour on a good day. During a live sale event, we didn’t have an hour.

The GraphQL layer gave us a different option. We could patch it in real time.

Cached responses for non-essential fields – product recommendations, recently viewed, personalized suggestions. These were expensive backend calls that enriched the page but weren’t required for the core flow. We turned them off at the resolver level and returned cached data instead. The page rendered slightly staler content. The backends stopped drowning.
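A minimal sketch of that kind of resolver-level switch, in Go. This is an illustration of the pattern, not the gateway's actual code: `DegradableResolver`, `SetDegraded`, and the `Recommendations` type are hypothetical names, and the cache is a plain in-memory map with no expiry.

```go
package main

import (
	"fmt"
	"sync"
)

// Recommendations is a hypothetical payload for a non-essential field.
type Recommendations []string

// DegradableResolver wraps an expensive backend call with a kill switch
// and a last-known-good cache. All names here are illustrative.
type DegradableResolver struct {
	mu       sync.RWMutex
	degraded bool
	cache    map[string]Recommendations
	fetch    func(userID string) (Recommendations, error)
}

func NewDegradableResolver(fetch func(string) (Recommendations, error)) *DegradableResolver {
	return &DegradableResolver{cache: make(map[string]Recommendations), fetch: fetch}
}

// SetDegraded flips the switch at runtime, e.g. from an admin endpoint.
func (r *DegradableResolver) SetDegraded(on bool) {
	r.mu.Lock()
	r.degraded = on
	r.mu.Unlock()
}

// Resolve calls the backend in normal mode and remembers the result.
// In degraded mode it never touches the backend: it returns whatever
// was cached for that user, which may be empty.
func (r *DegradableResolver) Resolve(userID string) (Recommendations, error) {
	r.mu.RLock()
	degraded, cached := r.degraded, r.cache[userID]
	r.mu.RUnlock()
	if degraded {
		return cached, nil
	}

	fresh, err := r.fetch(userID)
	if err != nil {
		return nil, err
	}
	r.mu.Lock()
	r.cache[userID] = fresh
	r.mu.Unlock()
	return fresh, nil
}

func main() {
	r := NewDegradableResolver(func(userID string) (Recommendations, error) {
		fmt.Println("backend called for", userID)
		return Recommendations{"tent", "stove"}, nil
	})

	fresh, _ := r.Resolve("u1") // normal mode: hits the backend
	fmt.Println(fresh)

	r.SetDegraded(true)
	stale, _ := r.Resolve("u1") // degraded mode: served from cache
	fmt.Println(stale)
}
```

The point of the design is that the switch lives in one place. Flipping it changes what clients see without touching the backend that is struggling.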

Changed aggregation logic on the fly – some resolvers combined data from multiple backends. When one backend was slow, the aggregation waited for it, holding up the entire response. We patched the resolver to skip the slow backend and return partial data. The client got most of what it needed in a fraction of the time.
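One way to express "skip the slow backend" is a deadline on the whole aggregation. The sketch below is an assumption about how such a patch could look, not the code we shipped: `AggregatePartial`, `Fetcher`, and `fakeBackend` are made-up names, and a real resolver would derive its context from the incoming request rather than from `context.Background()`.

```go
package main

import (
	"context"
	"fmt"
	"sort"
	"time"
)

// Fetcher is a hypothetical backend call that honors context cancellation.
type Fetcher func(ctx context.Context) (string, error)

type result struct {
	name  string
	value string
	err   error
}

// AggregatePartial calls every backend concurrently and returns whatever
// arrived before the timeout, plus the sorted names of the backends that
// were skipped (too slow or failed).
func AggregatePartial(timeout time.Duration, backends map[string]Fetcher) (map[string]string, []string) {
	ctx, cancel := context.WithTimeout(context.Background(), timeout)
	defer cancel()

	// Buffered so goroutines that finish late never block on send.
	results := make(chan result, len(backends))
	for name, fetch := range backends {
		go func(name string, fetch Fetcher) {
			v, err := fetch(ctx)
			results <- result{name, v, err}
		}(name, fetch)
	}

	data := make(map[string]string, len(backends))
collect:
	for received := 0; received < len(backends); received++ {
		select {
		case r := <-results:
			if r.err == nil {
				data[r.name] = r.value
			}
		case <-ctx.Done():
			break collect // deadline hit: stop waiting, return what we have
		}
	}

	var skipped []string
	for name := range backends {
		if _, ok := data[name]; !ok {
			skipped = append(skipped, name)
		}
	}
	sort.Strings(skipped)
	return data, skipped
}

// fakeBackend simulates a backend that answers after the given delay.
func fakeBackend(delay time.Duration, value string) Fetcher {
	return func(ctx context.Context) (string, error) {
		select {
		case <-time.After(delay):
			return value, nil
		case <-ctx.Done():
			return "", ctx.Err()
		}
	}
}

func main() {
	backends := map[string]Fetcher{
		"price":   fakeBackend(5*time.Millisecond, "19.99"),
		"stock":   fakeBackend(5*time.Millisecond, "in stock"),
		"reviews": fakeBackend(2*time.Second, "4.5 stars"), // the slow one
	}
	data, skipped := AggregatePartial(100*time.Millisecond, backends)
	fmt.Println("data:", data)
	fmt.Println("skipped:", skipped)
}
```

Returning the list of skipped backends alongside the data lets the caller decide whether the partial response is good enough or should be surfaced to the client as an error on those fields.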

Fixed logical issues from the gateway – during the event, we discovered bugs that only appeared under load. There was no time to fix the source service, so we patched a workaround into the GraphQL layer first. The backend got the proper fix after the event.

All of this happened because the GraphQL layer sat between clients and backends. It was the one place where we could intervene without coordinating with every team, without deploying to every service. One codebase, one deploy pipeline, five people who understood it.

The mission dashboard

After that first event, we built a dashboard on top of the gateway’s metrics. Not a general-purpose monitoring tool – a purpose-built board organized around the buyer and seller journey.

Big boxes. Green means smooth. Yellow means error rates are climbing. Red means something is broken. You could tell what was choking at a glance – is it search, is it checkout, is it the payment callback, is it the seller dashboard. No clicking into graphs or filtering by service. The journey stages were the categories, laid out chronologically.
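The colour logic behind a board like this can be very small. The sketch below is a simplification under stated assumptions: the thresholds (1% and 5%) are invented for illustration, yellow is modelled as a fixed error-rate band rather than a trend, and `StageMetrics` and `Classify` are hypothetical names.

```go
package main

import "fmt"

// Status is the colour of one box on the board.
type Status string

const (
	Green  Status = "green"
	Yellow Status = "yellow"
	Red    Status = "red"
)

// StageMetrics holds request counts for one journey stage over a
// recent time window. The shape is an assumption for this sketch.
type StageMetrics struct {
	Stage    string
	Requests int
	Errors   int
}

// Illustrative thresholds, not values from the real dashboard.
const (
	yellowErrorRate = 0.01
	redErrorRate    = 0.05
)

// Classify maps a stage's error rate to a box colour.
func Classify(m StageMetrics) Status {
	if m.Requests == 0 {
		// Assumption: no traffic is shown as green. During a sale,
		// zero traffic may deserve its own alert instead.
		return Green
	}
	rate := float64(m.Errors) / float64(m.Requests)
	switch {
	case rate >= redErrorRate:
		return Red
	case rate >= yellowErrorRate:
		return Yellow
	default:
		return Green
	}
}

func main() {
	// Stages are listed in journey order, not by owning service.
	journey := []StageMetrics{
		{Stage: "search", Requests: 1000, Errors: 2},
		{Stage: "checkout", Requests: 1000, Errors: 20},
		{Stage: "payment callback", Requests: 1000, Errors: 100},
		{Stage: "seller dashboard", Requests: 1000, Errors: 0},
	}
	for _, m := range journey {
		fmt.Printf("%-18s %s\n", m.Stage, Classify(m))
	}
}
```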

Our CTO had it installed on a screen overhead. What started as an experiment for the team became the dashboard that leadership watched during events. It works because it maps to how the business thinks – not “service X has high latency” but “buyers can’t complete checkout.”