The shop needed catalog search. Users type something, products come back. Sounds trivial until you start building it.

Our stack is Node.js, MySQL for the primary data store, MongoDB for everything else we need to go fast. The catalog lives in MySQL – products, categories, attributes, prices. Normalized, relational, correct. But relational isn’t searchable. Try finding “blue cotton kurta” across five joined tables with MySQL FULLTEXT on MyISAM. It sort of works. The relevance is terrible.

What’s out there

MySQL FULLTEXT indexes exist, but only on MyISAM tables. We’re on InnoDB for everything transactional. Running a parallel MyISAM table just for search felt wrong. The relevance ranking is basic – term frequency, nothing more. No field weighting, no boosting.

MongoDB doesn’t have text search. You can regex on a field, you can query by exact values, but there’s nothing resembling full-text search built in.

I’ve been reading about Elasticsearch. Built on Lucene, looks powerful, but it’s version 0.something. Putting the production catalog behind something that young isn’t a bet I want to make right now.

Solr is the established answer. Java, XML configuration, heavyweight. We’re a small team on a Node.js stack. Standing up Solr felt like bringing a tank to a street fight.

So we build it ourselves.

Flat documents

The insight: flatten a product into a single document with all its searchable text in predictable fields, and MongoDB becomes your search index. Not because MongoDB is a search engine – it isn’t – but because it’s fast at querying documents by fields you’ve indexed.

For every product in MySQL, we write a flat document to MongoDB:

{

productId: 4821,

tokens: ["blue", "cotton", "kurta", "men", "casual"],

title: "blue cotton kurta for men",

category: "men/clothing/kurtas",

attributes: "cotton, blue, casual, M, L, XL",

price: 899,

inStock: true

}

The tokens array is the search surface. We tokenize the title, category path, attribute values, brand – anything a user might type. Lowercase, strip punctuation, split on whitespace.

MongoDB’s $all on the tokens array gives us AND semantics. Search for “blue cotton” and you get documents containing both tokens. We default to AND because product search with OR is useless – nobody searching “blue cotton” wants everything that’s either blue or cotton.

Weighting without a search engine

Token matching gets you to “relevant.” Getting to “most relevant first” needs ordering.

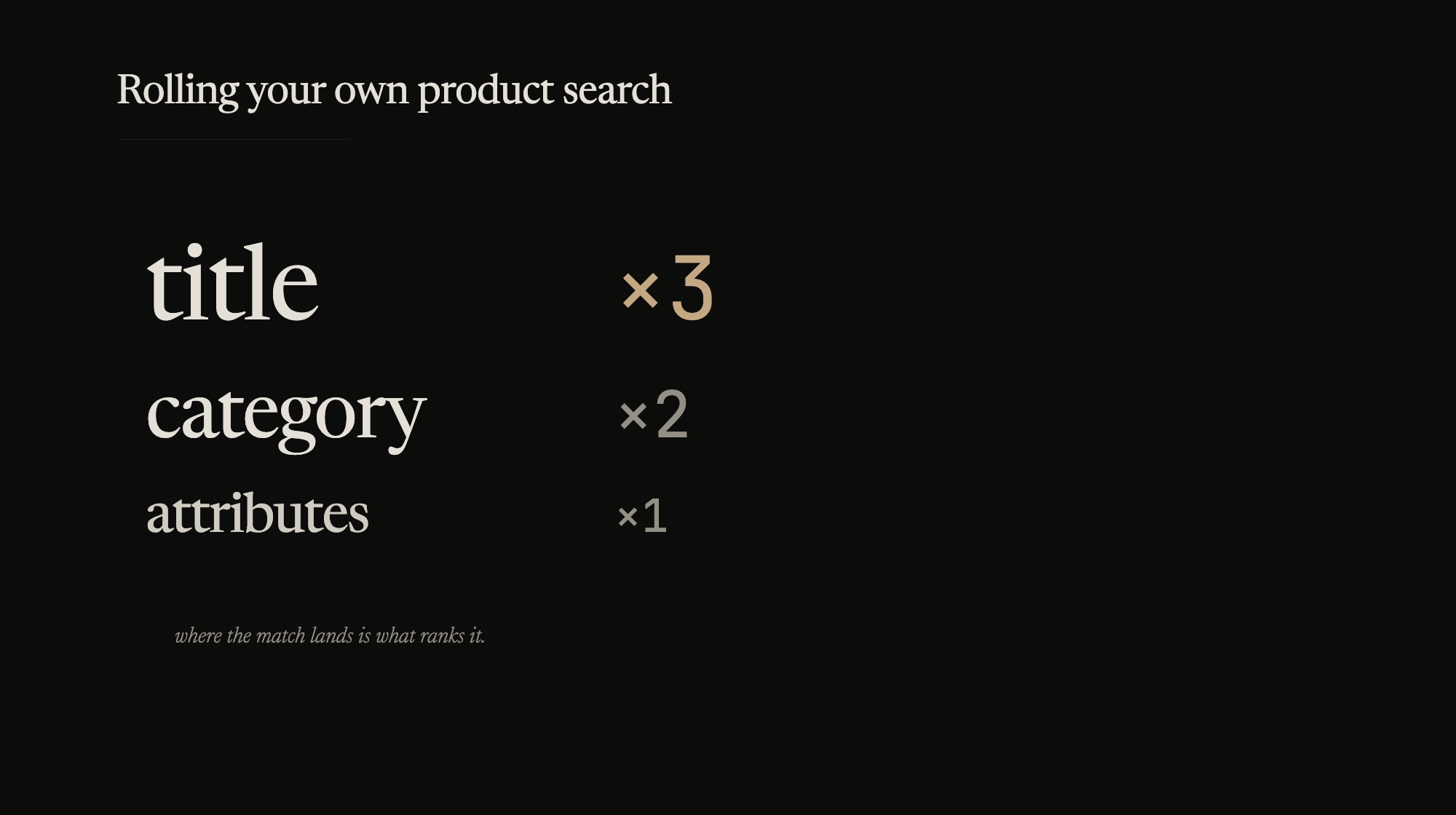

We score by where the match happened:

- Title match: weight 3

- Category match: weight 2

- Attribute match: weight 1

A product called “Blue Cotton Kurta” that matches on title and category outranks one where “blue” only appears in the color attribute. The scoring is crude – multiply weight by matching tokens per field, sum it up. But it works better than returning results in insertion order.

function score(doc, queryTokens) {

var s = 0;

queryTokens.forEach(function(token) {

if (doc.title.indexOf(token) !== -1) s += 3;

if (doc.category.indexOf(token) !== -1) s += 2;

if (doc.attributes.indexOf(token) !== -1) s += 1;

});

return s;

}

Application-side scoring. MongoDB returns the candidate set, Node scores and sorts. At our catalog size – tens of thousands of products – this is fast enough.

Sync is the actual problem

Building the search layer took a few days. Keeping it in sync with MySQL has been the ongoing headache.

Product updated in MySQL? The flat doc in MongoDB needs to reflect it. Price changes, stock status, attribute edits – all need to propagate. We run a sync job that polls MySQL for recent changes and rewrites the corresponding MongoDB documents.

The sync runs on a timer. Products updated in the last N minutes get re-flattened and upserted. Search results can lag by a few minutes. For titles and descriptions, that’s fine. For price and stock, it’s a problem. A customer finding a product through search and seeing a different price on the product page is a bad experience.

Event-driven sync would fix this – product update pushes to a queue, consumer writes the flat doc immediately. Haven’t built it yet. Polling works at our current scale, and the staleness window is tolerable most of the time.

Where this breaks

This works for tens of thousands of products with straightforward text matching. It won’t survive:

Fuzzy matching. User types “kurtha” instead of “kurta” – zero results. We don’t have stemming, edit-distance matching, or phonetic normalization. Implementing any of that in application code means a custom NLP pipeline. Different project.

Faceted search. “Blue kurtas under 1000, size L, cotton only.” The flat doc has the data, but computing facet counts across the result set in application code gets expensive fast. This is where a real search engine earns its keep.

Scale. Application-side scoring works because our candidate sets are small. At a million products, pulling thousands of documents into Node and sorting them won’t fly. You need the query engine to score.

When we hit these walls, we’ll probably look at Elasticsearch again. It should be more mature by then. For now, the custom approach is serving the shop, and we understand every moving part. There’s something to be said for infrastructure you can debug with console.log.