Put Redis in front of a database and reads get fast. The cost is a cache layer that’s now load-bearing, and a set of failure modes that come with that.

Three write patterns, three hard problems. The patterns determine consistency. The problems determine whether your cache layer is a net positive or a source of outages.

Write patterns

Cache-aside (lazy loading). The application checks cache on read. On miss, it reads from the database and populates cache. Writes go directly to the database; cache entries are either invalidated or left to expire.

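```go
import (
	"context"
	"database/sql"
	"encoding/json"
	"time"

	"github.com/redis/go-redis/v9" // assumed client; go-redis v8 exposes the same calls
)

// GetUser reads through the cache and falls back to the database on a miss.
// User and queryUser are defined elsewhere in the application.
func GetUser(ctx context.Context, rdb *redis.Client, db *sql.DB, userID string) (*User, error) {
	key := "user:" + userID

	cached, err := rdb.Get(ctx, key).Result()
	if err == nil {
		var u User
		if err := json.Unmarshal([]byte(cached), &u); err == nil {
			return &u, nil
		}
		// Corrupt cache entry: delete it and fall through to the database.
		rdb.Del(ctx, key)
	}
	// Any error from Get (redis.Nil on a miss, or Redis being unreachable)
	// also falls through, so a cache outage degrades to database reads.

	u, err := queryUser(db, userID)
	if err != nil {
		return nil, err
	}

	// Populating the cache is best effort; a failure here must not fail the read.
	if data, err := json.Marshal(u); err == nil {
		rdb.Set(ctx, key, data, time.Hour)
	}
	return u, nil
}
```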

Simple, safe, handles Redis outages gracefully (fall through to database). The cost is cold-start latency and a window where cache and database disagree after a write. For read-heavy workloads where eventual consistency is acceptable, this is the default choice.
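The read path above leaves the write side implicit. Here is a minimal sketch of the two write options, using plain maps as stand-ins for Redis and the database. The `store` type and all three function names are made up for illustration. Skip invalidation and readers see the old value until the entry expires; delete the key after the database write and the next read repopulates it.

```go
package main

// store is a stand-in for either tier; a map is enough to trace the ordering.
type store map[string]string

// readAside returns the cached value, or loads it from db and caches it on a miss.
func readAside(cache, db store, key string) string {
	if v, ok := cache[key]; ok {
		return v
	}
	v := db[key]
	cache[key] = v
	return v
}

// writeNoInvalidate updates only the database; the cached copy stays until it expires.
func writeNoInvalidate(db store, key, val string) {
	db[key] = val
}

// writeInvalidate updates the database, then deletes the cached copy so the
// next read repopulates it.
func writeInvalidate(cache, db store, key, val string) {
	db[key] = val
	delete(cache, key)
}
```

With go-redis, the delete in `writeInvalidate` would be an `rdb.Del(ctx, key)` issued after the database write succeeds.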

Write-through. Every write updates both the database and the cache synchronously, so the cache stays current as long as both writes succeed. The cost is write latency – every write takes two round trips, one to the database and one to Redis. You also cache data that may never be read, which wastes memory on writes to cold keys.

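```go
// UpdateUser writes to the database first, then refreshes the cache entry.
func UpdateUser(ctx context.Context, rdb *redis.Client, db *sql.DB, u *User) error {
	if err := persistUser(db, u); err != nil {
		return err
	}
	data, err := json.Marshal(u)
	if err != nil {
		return err
	}
	// If this Set fails, the database is already updated and the cached copy
	// stays stale until its TTL expires.
	return rdb.Set(ctx, "user:"+u.ID, data, time.Hour).Err()
}
```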

Use this when reads are frequent and consistency matters – session data, user profiles on a high-traffic service, anything where a stale read causes visible bugs.

Write-behind (write-back). Writes go to cache immediately, then flush to the database asynchronously in batches. Write latency drops to a single Redis round trip. Database write load drops because multiple updates to the same key coalesce into one write.

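```go
// WriteBuffer coalesces updates in memory and flushes them to the database in batches.
type WriteBuffer struct {
	mu      sync.Mutex
	pending map[string]*User // latest unflushed value per user ID
	rdb     *redis.Client
	db      *sql.DB
}

// Update writes to the cache immediately and queues the database write.
func (wb *WriteBuffer) Update(ctx context.Context, u *User) error {
	data, err := json.Marshal(u)
	if err != nil {
		return err
	}
	if err := wb.rdb.Set(ctx, "user:"+u.ID, data, time.Hour).Err(); err != nil {
		return err
	}
	wb.mu.Lock()
	wb.pending[u.ID] = u
	wb.mu.Unlock()
	return nil
}

// Flush swaps out the pending map and persists the batch it held.
func (wb *WriteBuffer) Flush(ctx context.Context) error {
	wb.mu.Lock()
	batch := wb.pending
	wb.pending = make(map[string]*User)
	wb.mu.Unlock()

	for _, u := range batch {
		if err := persistUser(wb.db, u); err != nil {
			// Partial flush: this entry and the rest of the batch are dropped
			// here, because the batch was already swapped out. Needs retry logic.
			return err
		}
	}
	return nil
}
```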

The risk is data loss. If the process holding the buffer dies between a write and the next flush (or Redis does, when the buffer lives there), the data exists nowhere durable. The partial flush problem in the code above is real – production write-behind needs idempotent writes, a dead-letter queue for failed flushes, and monitoring on buffer depth. Use this for high-write counters, analytics, activity feeds – data where losing a few seconds of writes is tolerable.
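One way to address the partial flush is to put failed entries back in the buffer. The sketch below is a simplified, hypothetical variant: `buffer`, `put`, `flush`, `size`, and `errUnavailable` are illustrative names, values are plain ints, and `persist` stands in for the database write. It shows coalescing and requeue-on-failure only; a dead-letter queue and retry limits are left out.

```go
package main

import (
	"errors"
	"sync"
)

// errUnavailable simulates a failed database write.
var errUnavailable = errors.New("database unavailable")

// buffer holds the latest unflushed value per ID.
type buffer struct {
	mu      sync.Mutex
	pending map[string]int
}

func newBuffer() *buffer { return &buffer{pending: make(map[string]int)} }

// put records the latest value for id; repeated puts coalesce into one entry.
func (b *buffer) put(id string, v int) {
	b.mu.Lock()
	b.pending[id] = v
	b.mu.Unlock()
}

// size reports how many entries are waiting, the buffer depth worth monitoring.
func (b *buffer) size() int {
	b.mu.Lock()
	defer b.mu.Unlock()
	return len(b.pending)
}

// flush persists every pending entry and returns how many writes succeeded,
// plus the first error seen. Failed entries are requeued unless a newer value
// for the same ID arrived while the flush was running.
func (b *buffer) flush(persist func(id string, v int) error) (int, error) {
	b.mu.Lock()
	batch := b.pending
	b.pending = make(map[string]int)
	b.mu.Unlock()

	written := 0
	var firstErr error
	for id, v := range batch {
		if err := persist(id, v); err != nil {
			if firstErr == nil {
				firstErr = err
			}
			b.mu.Lock()
			if _, newer := b.pending[id]; !newer {
				b.pending[id] = v
			}
			b.mu.Unlock()
			continue
		}
		written++
	}
	return written, firstErr
}
```

Requeueing only works if `persist` is idempotent, since an entry whose write timed out may already have landed in the database.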

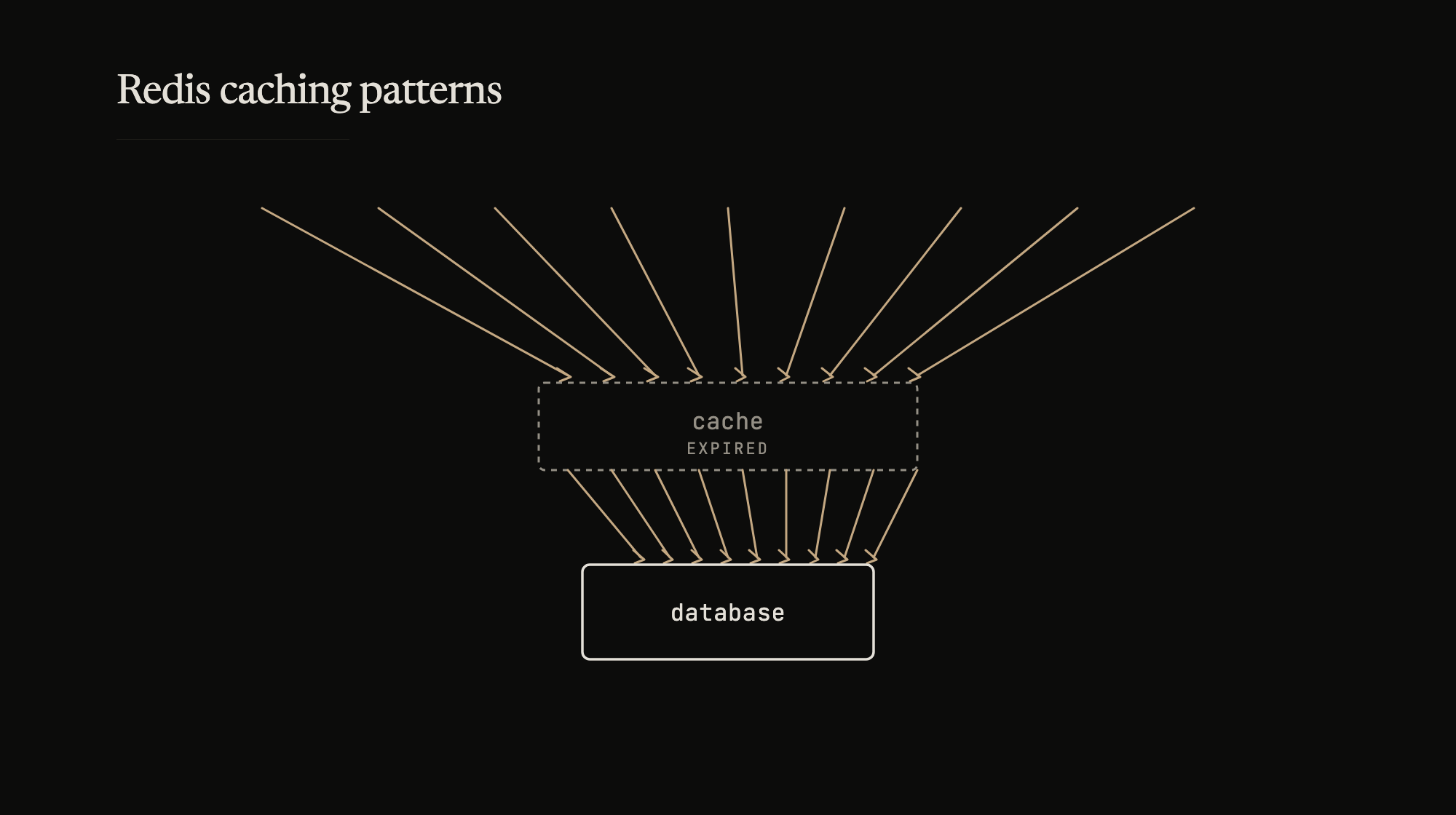

Problem 1: Stampede

A popular cache key expires. Hundreds of requests arrive simultaneously, all see a miss, all query the database. The database spikes, possibly falls over.

The fix is a distributed lock. One request acquires the lock, fetches from the database, populates cache. Everyone else waits briefly and retries against cache.

```go
func GetWithStampedeProtection(ctx context.Context, rdb *redis.Client, db *sql.DB, key string, fetch func() ([]byte, error), ttl time.Duration) ([]byte, error) {
	val, err := rdb.Get(ctx, key).Result()
	if err == nil {
		return []byte(val), nil
	}
	if err != redis.Nil {
		// Redis itself is failing, so locking cannot work either: go to the source.
		return fetch()
	}

	lockKey := "lock:" + key
	lockID := uuid.New().String() // uuid is assumed to be github.com/google/uuid
	// A SetNX error leaves acquired false, which sends this request down the wait path.
	acquired, _ := rdb.SetNX(ctx, lockKey, lockID, 5*time.Second).Result()
	if acquired {
		defer func() {
			// Atomic compare-and-delete to avoid releasing someone else's lock
			rdb.Eval(ctx, `if redis.call("get",KEYS[1]) == ARGV[1] then return redis.call("del",KEYS[1]) else return 0 end`, []string{lockKey}, lockID)
		}()

		data, err := fetch()
		if err != nil {
			return nil, err
		}
		rdb.Set(ctx, key, data, ttl)
		return data, nil
	}

	// Someone else is fetching: wait and retry the cache
	time.Sleep(100 * time.Millisecond)
	val, err = rdb.Get(ctx, key).Result()
	if err == nil {
		return []byte(val), nil
	}
	// Fall back to the database if the cache is still empty. If the lock holder
	// takes longer than the wait above, waiters end up here and query as well.
	return fetch()
}
```

The lock uses SetNX (set-if-not-exists) with a TTL to prevent deadlocks if the holder crashes. The release uses a Lua script to atomically compare the lock value and delete – without this, a slow holder can release a lock that’s already been acquired by someone else after expiry.
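To make the release race concrete, here is a small trace that replaces Redis with an in-memory stand-in and wall-clock time with logical ticks. Everything in it (`lockStore`, `setNX`, `naiveRelease`, `safeRelease`, `slowHolder`) is hypothetical and exists only to replay the sequence: A acquires, A's lock expires, B acquires, A releases.

```go
package main

// lockStore is an in-memory stand-in for the Redis lock key. It is not a
// Redis client; it only models ownership and expiry.
type lockStore struct {
	owner   string // empty means the lock is free
	expires int    // logical tick at which the lock lapses
}

// setNX mirrors a set-if-not-exists with a TTL: it succeeds only if the lock
// is free or has expired.
func (s *lockStore) setNX(id string, now, ttl int) bool {
	if s.owner != "" && now < s.expires {
		return false
	}
	s.owner, s.expires = id, now+ttl
	return true
}

// naiveRelease deletes the lock unconditionally, like a bare DEL.
func (s *lockStore) naiveRelease() { s.owner = "" }

// safeRelease deletes the lock only if id still owns it, like the Lua
// compare-and-delete script.
func (s *lockStore) safeRelease(id string) bool {
	if s.owner != id {
		return false
	}
	s.owner = ""
	return true
}

// slowHolder replays the failure: A acquires at tick 0 with TTL 5, stalls past
// expiry, B acquires at tick 6, then A releases. It returns the lock owner
// after A's release.
func slowHolder(useSafeRelease bool) string {
	s := &lockStore{}
	s.setNX("A", 0, 5)
	s.setNX("B", 6, 5) // A's lock has expired, so B gets it
	if useSafeRelease {
		s.safeRelease("A")
	} else {
		s.naiveRelease()
	}
	return s.owner
}
```

With the naive release, B's lock is gone and a third request can start a duplicate fetch. With the compare-and-delete, A's release is a no-op and B still holds the lock.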

Problem 2: Coordinated expiry

If many keys share the same TTL and were written around the same time, they expire together. Same effect as a stampede, but across many keys simultaneously.

TTL jitter breaks the coordination:

```go
// JitteredTTL returns base shifted by a random offset of up to ±jitterPct of base.
// rand is math/rand.
func JitteredTTL(base time.Duration, jitterPct float64) time.Duration {
	jitter := float64(base) * jitterPct
	offset := (rand.Float64()*2 - 1) * jitter
	return base + time.Duration(offset)
}

// base 1h with 10% jitter: TTL ranges from 54m to 66m
rdb.Set(ctx, key, data, JitteredTTL(time.Hour, 0.10))
```

A 10% jitter on a 1-hour TTL spreads expirations across a 12-minute window. A stampede on a single key is still possible, but the system-wide wave is broken.

Problem 3: Invalidation

The classic hard problem. Two approaches that work in practice:

Tag-based invalidation. When caching a value, associate it with tags. To invalidate, look up all keys for a tag and delete them.

```go
// SetWithTags stores val under key and records key in a set for each tag.
func SetWithTags(ctx context.Context, rdb *redis.Client, key string, val []byte, ttl time.Duration, tags []string) error {
	pipe := rdb.Pipeline()
	pipe.Set(ctx, key, val, ttl)
	for _, tag := range tags {
		pipe.SAdd(ctx, "tag:"+tag, key)
		// Keep the tag set alive a little longer than the entry it indexes.
		pipe.Expire(ctx, "tag:"+tag, ttl+time.Hour)
	}
	_, err := pipe.Exec(ctx)
	return err
}

// InvalidateTag deletes every key recorded under tag, then the tag set itself.
func InvalidateTag(ctx context.Context, rdb *redis.Client, tag string) error {
	tagKey := "tag:" + tag
	keys, err := rdb.SMembers(ctx, tagKey).Result()
	if err != nil {
		return err
	}
	if len(keys) == 0 {
		return nil
	}

	pipe := rdb.Pipeline()
	pipe.Del(ctx, keys...)
	pipe.Del(ctx, tagKey)
	_, err = pipe.Exec(ctx)
	return err
}
```

A user profile update invalidates the user-123 tag, which clears the profile cache, the preferences cache, and any aggregated views that included that user. The tag index is a Redis set, so adding a key is O(1) and reading the members for invalidation is O(N) in the number of tagged keys.

Event-driven invalidation. Domain events (user updated, price changed, order placed) trigger targeted cache deletes. This decouples the write path from cache management – the service writing to the database doesn’t need to know what’s cached. The event handler does.

Both approaches compose. Tags handle the “what to invalidate” question. Events handle the “when to invalidate” question.
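A minimal sketch of the event-driven side, assuming events arrive as simple structs and the cache delete is injected as a function. `Event`, `Invalidator`, `On`, and `Handle` are hypothetical names, not part of any library; in production the `del` function would wrap a cache delete such as `rdb.Del`, and events would come from a message bus rather than a direct call.

```go
package main

// Event is a hypothetical domain event published by the write path.
type Event struct {
	Type     string // for example "user.updated"
	EntityID string
}

// Invalidator maps event types to the cache keys they make stale.
type Invalidator struct {
	rules map[string][]func(Event) []string
	del   func(keys ...string)
}

// NewInvalidator takes the delete function to call for stale keys.
func NewInvalidator(del func(keys ...string)) *Invalidator {
	return &Invalidator{rules: make(map[string][]func(Event) []string), del: del}
}

// On registers a rule: for events of eventType, keysFor lists the keys to delete.
func (inv *Invalidator) On(eventType string, keysFor func(Event) []string) {
	inv.rules[eventType] = append(inv.rules[eventType], keysFor)
}

// Handle deletes every key the registered rules produce for e and returns
// how many keys were passed to the delete function.
func (inv *Invalidator) Handle(e Event) int {
	var keys []string
	for _, keysFor := range inv.rules[e.Type] {
		keys = append(keys, keysFor(e)...)
	}
	if len(keys) > 0 {
		inv.del(keys...)
	}
	return len(keys)
}
```

The service that writes to the database only publishes `Event{Type: "user.updated", EntityID: "123"}`. Which keys that touches is decided by the rules registered with `On`, which is where a tag lookup could also plug in.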

Eviction policy

When Redis hits its memory limit, it needs to evict. The policy choice matters.

allkeys-lru – evict the least recently used key across all keys. Redis approximates LRU by sampling keys rather than tracking exact order. The right default for most cache workloads.

volatile-lru – same, but only evicts keys with a TTL set. Useful when some keys should never be evicted (reference data, config) and only TTL’d cache entries are expendable.

allkeys-lfu – evict the least frequently used. Better than LRU when access patterns have a stable hot set – popular products, active sessions. The tradeoff: LFU is slow to promote newly popular keys because they haven’t accumulated frequency yet. If access patterns shift often, LRU adapts faster.

noeviction – return errors on writes when full; reads keep working. This is the default policy. Use this when you’d rather fail loudly than silently drop cache entries.

Set maxmemory explicitly. Without it, Redis grows until the OS kills it.
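The LRU versus LFU tradeoff is easier to see on a tiny trace. The sketch below tracks exact recency and frequency per key and asks which key each policy would evict. It is an illustration only: Redis approximates both policies (sampling for LRU, a decaying probabilistic counter for LFU), and `tracker`, `touch`, and `victim` are made-up names.

```go
package main

// access records the metadata the two policies look at.
type access struct {
	lastUsed int // logical time of the most recent access
	hits     int // total number of accesses
}

// tracker records accesses per key using a logical clock.
type tracker struct {
	clock int
	keys  map[string]*access
}

func newTracker() *tracker { return &tracker{keys: make(map[string]*access)} }

// touch records one access to key.
func (t *tracker) touch(key string) {
	t.clock++
	a, ok := t.keys[key]
	if !ok {
		a = &access{}
		t.keys[key] = a
	}
	a.lastUsed = t.clock
	a.hits++
}

// victim returns the key an exact "lru" or "lfu" policy would evict next.
// LFU ties are broken by recency so the result does not depend on map order.
func (t *tracker) victim(policy string) string {
	victim, found := "", false
	for k, a := range t.keys {
		if !found {
			victim, found = k, true
			continue
		}
		v := t.keys[victim]
		older := a.lastUsed < v.lastUsed
		switch policy {
		case "lru":
			if older {
				victim = k
			}
		case "lfu":
			if a.hits < v.hits || (a.hits == v.hits && older) {
				victim = k
			}
		}
	}
	return victim
}
```

Touch a long-standing key ten times, then a newly popular key twice. LRU evicts the older key because it was used less recently; LFU evicts the new one because it has not accumulated hits yet, which is the slow-promotion effect described above.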

When to cache

Not everything benefits. Caching helps when reads outnumber writes, data doesn’t change every request, and a stale answer is better than a slow answer. User profiles, product catalogs, configuration, session data, computed aggregations – these are natural cache candidates.

Real-time balances, inventory counts, anything where staleness causes incorrect behavior – be careful. Write-through with short TTLs can work, but the consistency requirements need to be explicit.

A cache layer that becomes required for correctness (not just performance) is a distributed system with all the coordination problems that implies. Treat cache as an optimization. Design the system to work without it, slowly. Then add cache to make it fast.
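In code, "works without it, slowly" mostly means that cache errors are treated as misses and never reach the caller. A minimal sketch under that assumption follows. The `Cache` interface, `Load`, and the two test doubles are hypothetical; a Redis-backed type would implement `Cache` in a real service.

```go
package main

import "errors"

// Cache is a hypothetical minimal interface over the cache tier.
type Cache interface {
	Get(key string) (val string, ok bool, err error)
	Set(key, val string) error
}

var errCacheDown = errors.New("cache unavailable")

// downCache simulates an unreachable cache.
type downCache struct{}

func (downCache) Get(string) (string, bool, error) { return "", false, errCacheDown }
func (downCache) Set(string, string) error         { return errCacheDown }

// mapCache is a working in-memory cache.
type mapCache map[string]string

func (m mapCache) Get(k string) (string, bool, error) { v, ok := m[k]; return v, ok, nil }
func (m mapCache) Set(k, v string) error              { m[k] = v; return nil }

// Load returns the value for key, using the cache only as an accelerator:
// a cache error is handled like a miss, and a failed Set is ignored.
func Load(c Cache, key string, source func(string) (string, error)) (string, error) {
	if v, ok, err := c.Get(key); err == nil && ok {
		return v, nil
	}
	v, err := source(key)
	if err != nil {
		return "", err
	}
	_ = c.Set(key, v) // best effort
	return v, nil
}
```

Swapping `mapCache` for `downCache` changes latency and load on the source, not the result, which is the property to preserve.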