Our biggest sale event of the year is on the calendar. The date is fixed, the hour is fixed, and when it starts, traffic hits a multiple of normal within minutes. The engineering challenge isn’t handling surprise. It’s handling certainty at a scale we’ve never seen before.

We prepare for months. Six months out, teams start thinking about what their services need. Backend teams work with SRE and infra to define prescale configurations and autoscale rules. Terraform handles the provisioning. Every service team shares their estimates with infra, and the configurations get codified.

For the first event, capacity was based on calculations and estimates. It failed miserably.

What we got wrong

Our estimates were off. We had never seen this traffic before, so we guessed. We guessed low because cloud cost was a concern. The backends hit capacity faster than we expected and the degradation cascaded.

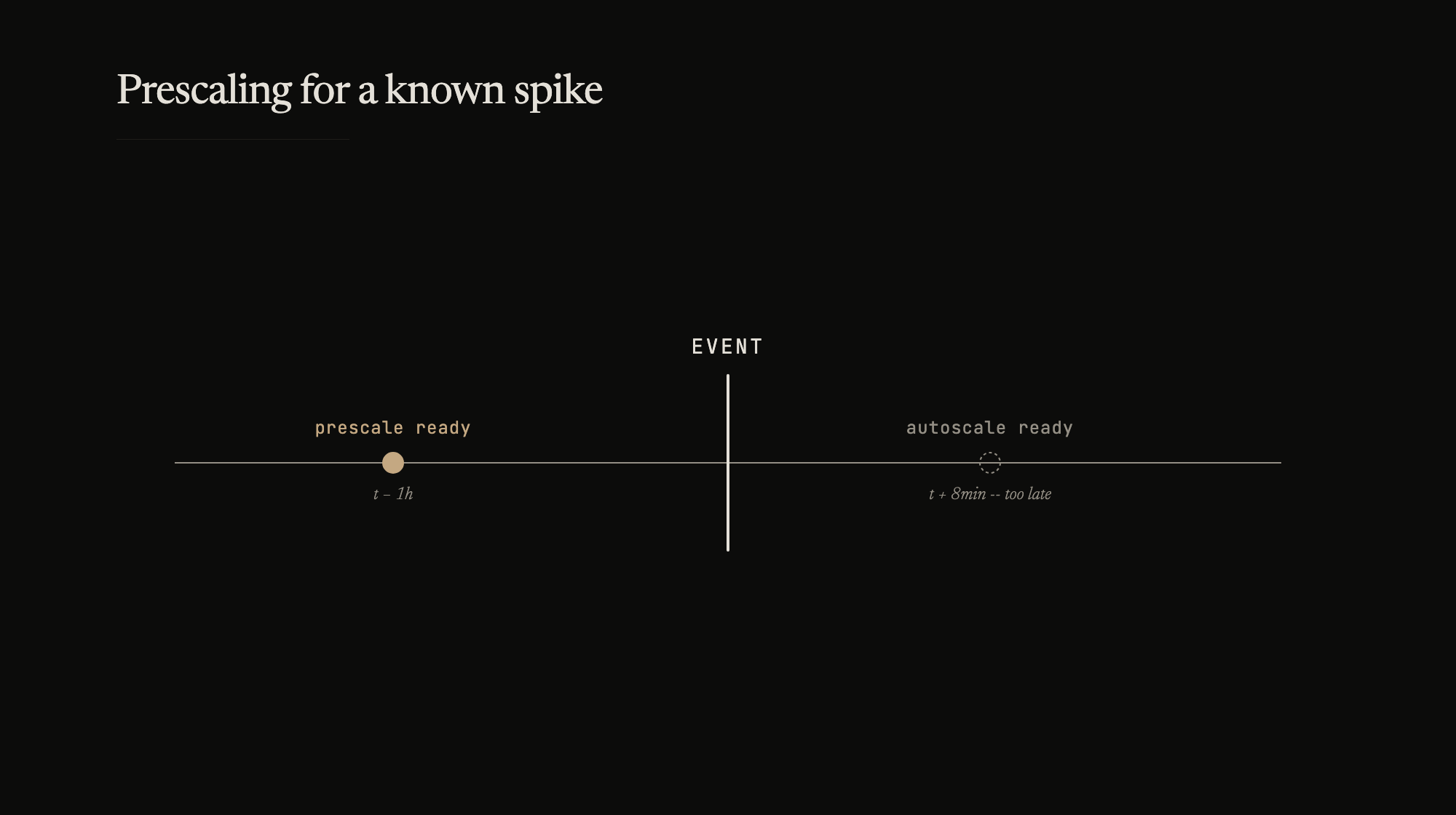

Autoscale rules existed. CPU and memory thresholds would trigger new instances. But autoscaling did not help when it mattered. By the time a new instance had spun up, warmed up the application, registered with the load balancer, passed its health check, and started taking traffic, the worst was already over. The spike had come and gone, the damage was done, and the fresh capacity arrived to serve normal traffic. Autoscale is a safety net for gradual load increases. A sale event isn’t gradual.
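To make the timing problem concrete, here is a rough sketch that adds up the stages a new instance goes through before it serves traffic and compares the total with how long the spike takes to arrive. The stage names follow the paragraph above; every duration is a hypothetical placeholder, not a measurement from our event.

```python
from dataclasses import dataclass


@dataclass
class ScaleOutStage:
    name: str
    seconds: float


def time_to_first_request(stages):
    """Total delay between the autoscale trigger and the instance serving traffic."""
    return sum(stage.seconds for stage in stages)


def arrives_in_time(stages, seconds_until_peak):
    """True only if the new capacity is serving before the peak hits."""
    return time_to_first_request(stages) <= seconds_until_peak


# Hypothetical durations, for illustration only.
STAGES = [
    ScaleOutStage("threshold crossed and scaling triggered", 120),
    ScaleOutStage("instance spins up", 90),
    ScaleOutStage("application warms up", 60),
    ScaleOutStage("load balancer registration and health check", 30),
]

if __name__ == "__main__":
    delay = time_to_first_request(STAGES)
    print(f"new capacity is ready after {delay:.0f}s")
    print("in time for a peak 180s away:", arrives_in_time(STAGES, 180))
```

With these made-up numbers the instance is ready after 300 seconds, so a peak that arrives in 180 seconds is over before the capacity can help.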

What saved us was the GraphQL layer. We patched resolvers in real time – cached responses for non-essential fields, skipped slow backends, returned partial data. The buffer did what it was built to do. But it was a band-aid on a prescaling failure.
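The kind of resolver patch described above can be sketched roughly as follows. This is not our actual resolver code: `resolve_with_fallback`, `resolve_product`, the `LAST_GOOD` cache, and the 200 ms timeout are all hypothetical names and values chosen for illustration. The idea is that a non-essential field falls back to its last cached value, or to `None` (partial data), when its backend is slow or down, while an essential field still fails loudly.

```python
import asyncio

# Hypothetical in-memory cache of the last good value per field.
LAST_GOOD = {}


async def resolve_with_fallback(field, fetch, timeout_s=0.2, essential=False):
    """Call the backend with a deadline; degrade gracefully for non-essential fields."""
    try:
        value = await asyncio.wait_for(fetch(), timeout=timeout_s)
        LAST_GOOD[field] = value  # remember the last successful response
        return value
    except (asyncio.TimeoutError, ConnectionError):
        if essential:
            raise  # essential fields must not silently degrade
        # Serve the cached value if there is one, otherwise omit the field (None).
        return LAST_GOOD.get(field)


async def resolve_product(fetchers):
    """fetchers maps a field name to (async fetch function, essential flag)."""
    result = {}
    for field, (fetch, essential) in fetchers.items():
        result[field] = await resolve_with_fallback(field, fetch, essential=essential)
    return result
```

A caller would pass something like `{"price": (fetch_price, True), "reviews": (fetch_reviews, False)}`, where the fetch functions are whatever async backend calls the schema already uses.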

What we changed

For the second event the next year, we threw out the estimates and ran load tests. Big ones. Simulated the expected traffic against production-like infrastructure and found the actual breaking points. The numbers from load testing were nothing like our calculations. Services that we thought had headroom broke at half the expected load. Services we’d over-provisioned could have run on fewer instances.

Prescale configurations moved from estimates to measured capacity. Each service had a target based on load test results, not arithmetic. The prescale happened hours before the event – capacity standing ready, warmed up, health checks passing, ready to take traffic the moment the doors opened.
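As a minimal sketch of how a measured breaking point can turn into a prescale target: take the peak the service is expected to see, multiply by a headroom factor, and divide by the per-instance throughput the load test actually sustained. The function name, the parameters, and the example numbers are assumptions for illustration, not our real configuration.

```python
import math


def prescale_instances(expected_peak_rps, measured_rps_per_instance, headroom=2.0):
    """Instances to have warm before the event, from load-test results.

    measured_rps_per_instance is the throughput one instance sustained in the
    load test before degrading, not a calculated estimate.
    """
    if measured_rps_per_instance <= 0:
        raise ValueError("measured_rps_per_instance must be positive")
    if headroom < 1.0:
        raise ValueError("headroom below 1.0 would under-provision")
    return math.ceil(expected_peak_rps * headroom / measured_rps_per_instance)


if __name__ == "__main__":
    # Hypothetical numbers: 12,000 rps expected, 450 rps per instance measured.
    print(prescale_instances(12_000, 450))  # 54
```

The result is rounded up because a fractional instance short is still short.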

This time, cloud cost was a line item, not a constraint. Getting through the sale window mattered more than the bill. Over-provisioning by 2x is cheaper than losing transactions during a sale.

Prescale by pattern

After two events, we started prescaling based on traffic patterns – hour of the day, day of the week. Not just for sale events. Regular traffic has predictable shapes. Monday morning is heavier than Sunday night. Lunchtime has a spike. If the pattern is known, the capacity should be ready before the pattern arrives.

Scheduled scaling rules spin up capacity before the traffic comes and scale down after it passes. The service doesn’t react to load – it anticipates it.
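A scheduled rule of this kind can be sketched as a lookup from time to capacity with a lead time, so that instances are up before the window opens and stay up until it closes. The rule shape, the 30-minute lead, and the instance counts below are hypothetical; in practice this would live in whatever scheduled-scaling feature the platform provides.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ScheduledRule:
    weekdays: frozenset  # 0 = Monday ... 6 = Sunday
    start_hour: int      # inclusive
    end_hour: int        # exclusive
    instances: int


def _scheduled(at, rules, baseline):
    """Capacity the schedule asks for at one moment in time."""
    matching = [
        rule.instances
        for rule in rules
        if at.weekday() in rule.weekdays and rule.start_hour <= at.hour < rule.end_hour
    ]
    return max(matching, default=baseline)


def desired_instances(now, rules, baseline, lead=timedelta(minutes=30)):
    """Scale up `lead` ahead of a window, scale down only once it has passed."""
    return max(_scheduled(now, rules, baseline), _scheduled(now + lead, rules, baseline))


# Hypothetical rules: a Monday morning ramp and a weekday lunchtime spike.
RULES = [
    ScheduledRule(frozenset({0}), 9, 11, 20),
    ScheduledRule(frozenset({0, 1, 2, 3, 4}), 12, 14, 30),
]

if __name__ == "__main__":
    monday_0845 = datetime(2024, 1, 1, 8, 45)  # 1 January 2024 was a Monday
    print(desired_instances(monday_0845, RULES, baseline=5))  # 20
```

Taking the maximum of the capacity for now and for `now + lead` is what keeps the rule from scaling down half an hour before the window ends.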

We’re preparing for the third event now. The playbook exists. The autoscale rules are still there, but nobody’s counting on them for the first five minutes.