PostgreSQL’s streaming replication is straightforward to set up. The documentation is clear, the configuration is well-understood, and base backups with pg_basebackup work reliably.

The operational problems are the hard part. They show up when the primary goes down and the automated failover does the wrong thing. Or when you promote a replica that’s silently been two hours behind. Or when you discover that backups you’ve been taking for months don’t actually restore.

Streaming replication

The foundation. The primary streams WAL (write-ahead log) records to one or more standbys. Configuration on the primary:

wal_level = replica

max_wal_senders = 3

max_replication_slots = 3

wal_keep_size = 1GB

On the standby (PostgreSQL 12 and later), create an empty standby.signal file in the data directory and set the connection string in postgresql.conf:

primary_conninfo = 'host=primary-host port=5432 user=replication'

The standby replays WAL as it arrives. In synchronous mode, the primary waits for standby confirmation before acknowledging a commit to the client – no acknowledged transaction is lost, at the cost of higher write latency. In asynchronous mode, the primary doesn’t wait – lower latency, but the standby can fall behind.

This is the first real decision. Synchronous replication gives RPO = 0 for acknowledged commits but hurts write throughput and couples primary availability to standby health: if the only synchronous standby is down, commits on the primary block until it returns. Asynchronous replication is faster but means the standby might be seconds or minutes behind. Most production setups use asynchronous replication with monitoring on lag.

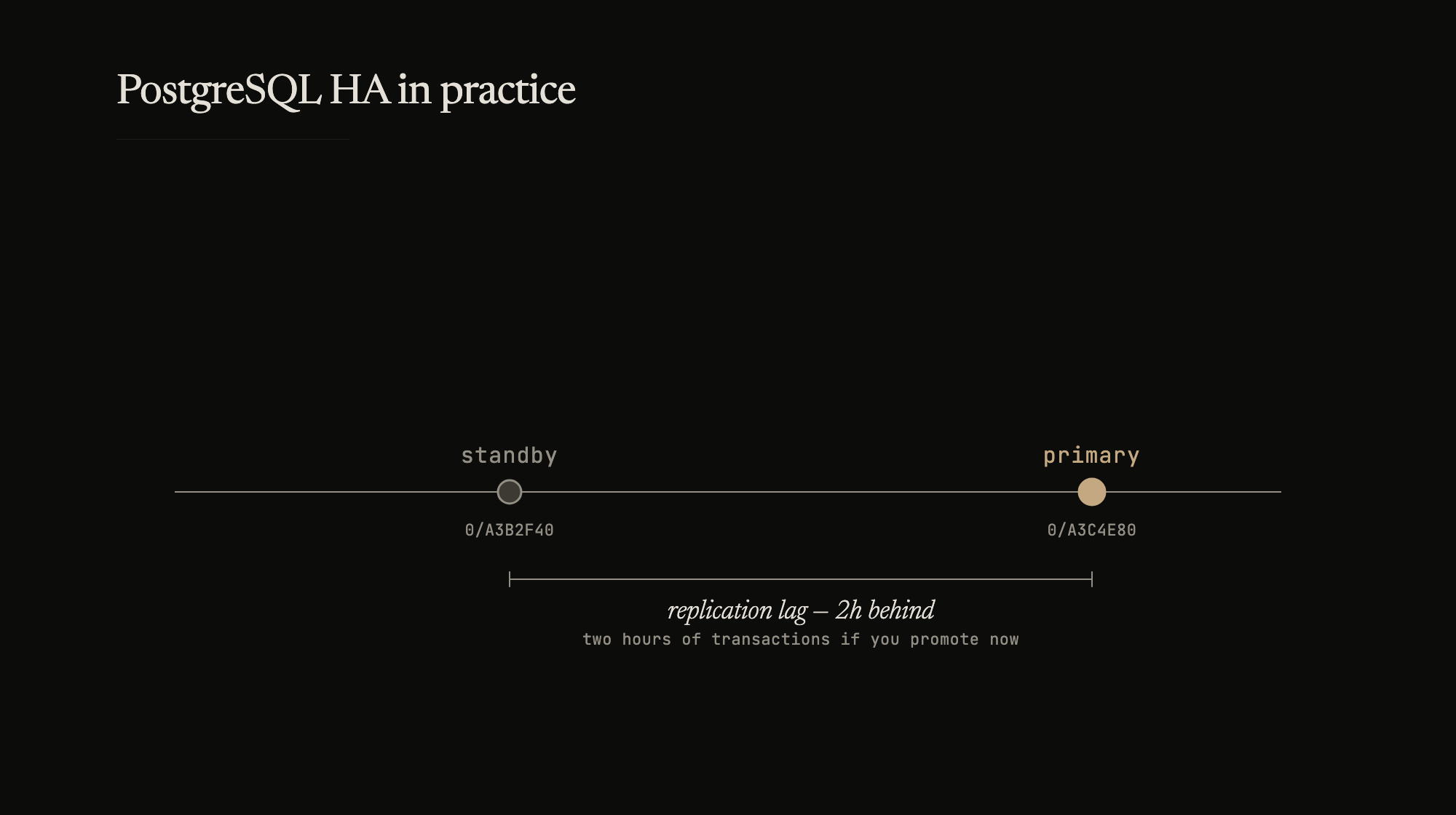

The replication lag trap

Replication lag is the distance between the primary’s current WAL position and what the standby has applied. In normal operation, this is milliseconds. Under load, during long transactions, or when the standby’s disk is slow, it grows silently.

The trap: nobody notices until failover. The standby gets promoted. It’s two hours behind. Two hours of transactions are lost – orders, payments, state changes. The data existed on the primary’s disk but never made it to the standby.

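Monitor pg_stat_replication on the primary. The replay_lag column reports, as a time interval, how far behind each standby is. It can be NULL when the system is idle, so also track the byte distance between pg_current_wal_lsn() and replay_lsn. Alert when lag exceeds your RPO. If your RPO is 5 minutes and lag hits 10 minutes, that’s an incident – not something to address at the next sprint planning.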
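A minimal sketch of that alert in Python, assuming the rows come from a query like LAG_QUERY run on the primary. StandbyLag, standbys_over_rpo, and the byte threshold are illustrative names, not part of PostgreSQL or any monitoring tool. The sketch also treats a standby with no row at all as a breach, because a disconnected standby simply disappears from pg_stat_replication.

```python
from dataclasses import dataclass
from typing import Optional

# Run on the primary. The view, columns, and functions are standard
# PostgreSQL; the column aliases are chosen for this sketch.
LAG_QUERY = """
SELECT application_name,
       EXTRACT(EPOCH FROM replay_lag) AS lag_seconds,
       pg_wal_lsn_diff(pg_current_wal_lsn(), replay_lsn) AS lag_bytes
FROM pg_stat_replication;
"""


@dataclass
class StandbyLag:
    name: str
    lag_seconds: Optional[float]  # None when replay_lag is NULL
    lag_bytes: int


def standbys_over_rpo(rows, expected_names, rpo_seconds, max_lag_bytes):
    """Return standbys that are missing or breach either lag threshold."""
    seen = {row.name for row in rows}
    # A standby that is not streaming has no row, so report it first.
    breaches = sorted(set(expected_names) - seen)
    for row in rows:
        too_slow = row.lag_seconds is not None and row.lag_seconds > rpo_seconds
        too_far = row.lag_bytes > max_lag_bytes
        if too_slow or too_far:
            breaches.append(row.name)
    return breaches
```

Checking bytes as well as seconds covers the idle case where the time-based column is NULL but the standby is still far behind.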

Replication slots prevent the primary from discarding WAL that the standby hasn’t consumed. Without slots, a slow standby can fall so far behind that the WAL it needs has been recycled. Then the only recovery path is a fresh base backup. Slots prevent this but introduce their own risk: if a standby goes offline, the slot retains WAL indefinitely, and the primary’s disk fills up. Monitor slot state in pg_replication_slots. On PostgreSQL 13 and later, max_slot_wal_keep_size can cap how much WAL a slot retains, at the cost of invalidating a slot that falls too far behind. Remove slots for permanently decommissioned standbys.
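One way to watch slots, sketched in Python under the assumption that something else runs SLOT_QUERY on the primary and hands the rows over. SlotState, risky_slots, and the retention threshold are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Run on the primary. pg_replication_slots and pg_wal_lsn_diff() are
# standard PostgreSQL; retained_bytes is an alias chosen for this sketch.
SLOT_QUERY = """
SELECT slot_name,
       active,
       pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn) AS retained_bytes
FROM pg_replication_slots;
"""


@dataclass
class SlotState:
    name: str
    active: bool
    retained_bytes: Optional[int]  # None when restart_lsn is NULL


def risky_slots(slots, max_retained_bytes):
    """Map slot name to the reason it needs attention."""
    findings = {}
    for slot in slots:
        retained = slot.retained_bytes or 0
        if retained > max_retained_bytes:
            findings[slot.name] = "retaining too much WAL"
        elif not slot.active:
            findings[slot.name] = "inactive"
    return findings
```

A slot that belongs to a decommissioned standby can then be dropped with pg_drop_replication_slot('slot_name').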

Automated failover

Tools like repmgr or Patroni handle failure detection and standby promotion. Configuring them is easy. Managing the operational risk is harder.

Split-brain. The primary is unreachable from the standby’s perspective, but it’s actually running – a network partition. The failover tool promotes the standby. Now two nodes accept writes. Data diverges. Reconciling is manual and painful, sometimes impossible.

Prevention: before promoting, verify the primary is actually down, not just unreachable. Check from multiple network paths. Use a witness node (a lightweight third server that votes on whether failover should proceed). Never promote on a single health check failure.
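The voting idea can be sketched in a few lines of Python. This is an illustration of the rule, not how repmgr or Patroni implement it: primary_confirmed_down and its parameters are made-up names, and each observer (standby, witness, and so on) is assumed to record its own recent health checks of the primary.

```python
from typing import Dict, List


def primary_confirmed_down(
    observations: Dict[str, List[bool]],
    required_failures: int = 3,
) -> bool:
    """Decide whether enough observers agree that the primary is down.

    observations maps an observer name to its recent health check results
    for the primary, oldest first; True means the primary was reachable.
    """
    down_votes = 0
    for checks in observations.values():
        recent = checks[-required_failures:]
        # Vote "down" only after several consecutive failures,
        # never on a single failed check.
        if len(recent) == required_failures and not any(recent):
            down_votes += 1
    # Require a strict majority of all observers, not just of those voting.
    return down_votes > len(observations) / 2
```

With only a standby and a witness, both must agree before promotion proceeds. A vote like this lowers the odds of split-brain but does not fence the old primary, so it is no substitute for shutting that node out of the write path.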

Promoting a lagged replica. Automated failover should check replication lag before promoting. If the standby is significantly behind, promoting it loses data. The failover tool should have a maximum-lag threshold – if lag exceeds it, refuse to promote automatically and alert for human decision.
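A sketch of that gate in Python. Decision and promotion_decision are hypothetical names, and the lag estimate is assumed to come from wherever you trust most, for example the last value your monitoring recorded before the primary went away. An unknown lag is treated as unsafe.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    PROMOTE = "promote"
    ESCALATE = "escalate"


def promotion_decision(
    lag_seconds: Optional[float],
    max_lag_seconds: float,
) -> Decision:
    """Allow automatic promotion only when lag is known and under the limit."""
    if lag_seconds is None:
        # No measurement means no evidence that promotion is safe.
        return Decision.ESCALATE
    if lag_seconds >= max_lag_seconds:
        return Decision.ESCALATE
    return Decision.PROMOTE
```

Escalating on a missing measurement is the conservative choice: it trades a slower recovery for not silently discarding data.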

Cascading failure. The primary fails. Failover promotes the standby. The application reconnects. But the standby’s connection pool is sized for read traffic, not primary traffic. It gets overwhelmed. Now both the old primary and the new primary are down.

Size standbys to handle primary-level traffic. Test failover under realistic load, not on an idle staging cluster.

WAL archiving and PITR

Streaming replication handles node failure. WAL archiving handles everything else – data corruption, accidental DROP TABLE, bugs that write bad data.

archive_mode = on

archive_command = 'test ! -f /archive/%f && cp %p /archive/%f'

Every WAL segment is copied to an archive (local disk, S3, GCS). Combined with periodic base backups, this enables point-in-time recovery – restore to any moment between the end of your oldest retained base backup and the last archived WAL segment.

The base backup + WAL archive is your actual safety net. Streaming replication protects against hardware failure. PITR protects against everything else, including problems replication would faithfully reproduce on every standby.
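To make the recovery side concrete, here is a small Python helper that renders the settings a restored base backup needs for point-in-time recovery on PostgreSQL 12 and later. render_pitr_settings is a hypothetical name, and the restore_command assumes the same local /archive layout as the archive_command above. You would also need an empty recovery.signal file in the data directory before starting the server.

```python
from datetime import datetime, timezone


def render_pitr_settings(archive_dir: str, target: datetime) -> str:
    """Return postgresql.conf lines for recovering to a point in time."""
    if target.tzinfo is None:
        # A naive timestamp is ambiguous, so refuse it outright.
        raise ValueError("recovery target must be timezone-aware")
    stamp = target.astimezone(timezone.utc).strftime("%Y-%m-%d %H:%M:%S+00")
    lines = [
        # %f and %p are placeholders that PostgreSQL expands itself.
        f"restore_command = 'cp {archive_dir}/%f %p'",
        f"recovery_target_time = '{stamp}'",
        "recovery_target_action = 'promote'",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_pitr_settings(
        "/archive", datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)
    ))
```

Pinning the target to an explicit UTC offset avoids surprises when the restore host runs in a different time zone than the original primary.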

Testing

A backup that doesn’t restore is not a backup. A failover procedure that hasn’t been tested is not a procedure.

Monthly: kill the primary during low-traffic hours. Observe whether failover completes within your RTO. Check data consistency on the new primary. Fix whatever broke.
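Measuring the drill against your RTO can be as simple as timing how long it takes until a node accepts writes again. A sketch in Python: seconds_until_writable is a hypothetical helper, and the is_writable probe is supplied by you, for instance a function that connects through the application’s connection string and checks that SELECT pg_is_in_recovery() returns false.

```python
import time


def seconds_until_writable(
    is_writable,
    timeout,
    interval=1.0,
    clock=time.monotonic,
    sleep=time.sleep,
):
    """Poll is_writable() and return elapsed seconds, or None on timeout."""
    start = clock()
    while clock() - start <= timeout:
        if is_writable():
            return clock() - start
        sleep(interval)
    return None
```

Start it the moment you kill the primary and compare the result with your RTO. A None result means failover did not complete within the timeout you allowed.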

Quarterly: full environment restoration from base backup + WAL archive to a recovery target. Restore to a specific point in time. Validate application-level data integrity, not just that PostgreSQL starts.

The restore test is the only proof that your backup pipeline works end-to-end. Archive corruption, permission issues, missing WAL segments – these only surface when you actually try to restore.
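Between restore tests, a cheap continuity check on the archive can catch one of those failure modes early. The Python sketch below looks for gaps in WAL segment file names. It assumes the default 16 MB wal_segment_size and a single timeline, and missing_segments is an illustrative name. It complements a real restore; it does not replace one.

```python
import re

# With 16 MB segments, the last 8 hex digits of a WAL file name run
# from 00000000 to 000000FF before the middle 8 digits increment.
SEGMENTS_PER_LOG = 256
WAL_NAME = re.compile(r"[0-9A-F]{24}")


def segment_number(name):
    """Convert a 24-character WAL file name to a linear segment number."""
    return int(name[8:16], 16) * SEGMENTS_PER_LOG + int(name[16:24], 16)


def missing_segments(filenames, timeline="00000001"):
    """Return names of segments absent between the first and last archived."""
    numbers = sorted(
        segment_number(name)
        for name in filenames
        # Skip .history, .backup, and .partial files and other timelines.
        if WAL_NAME.fullmatch(name) and name.startswith(timeline)
    )
    missing = []
    for prev, cur in zip(numbers, numbers[1:]):
        for seg in range(prev + 1, cur):
            high, low = divmod(seg, SEGMENTS_PER_LOG)
            missing.append(f"{timeline}{high:08X}{low:08X}")
    return missing
```

Feeding it the output of a directory or bucket listing is enough; an empty result means the names are contiguous, not that the files themselves are intact.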

Runbooks

Failover runbooks need to work under pressure for an on-call engineer who didn’t set up the system. Short commands, clear decision points, no ambiguity:

1. Check cluster state: repmgr cluster show

2. Check standby lag on the standby (the primary’s pg_stat_replication may be unreachable): SELECT now() - pg_last_xact_replay_timestamp();

3. If lag < 5min: repmgr standby promote

4. If lag >= 5min: STOP. Assess data loss. Page senior on-call.

5. After promotion: Update connection strings / DNS

6. Verify: Run application health checks

Step 4 is the one that matters. Automated tools skip it unless they are configured with a maximum-lag threshold. A runbook that forces a human decision at the threshold where data loss becomes significant is more reliable than fully automated failover that promotes blindly.

The operational stance

HA for PostgreSQL is an operational stance, not a project you finish. The streaming replication config is a one-time setup. The ongoing work is monitoring lag, testing failover, validating backups, and updating runbooks as the system evolves.

The systems that survive incidents are the ones where someone asked “what if this fails?” and then actually tested the answer.