Nginx as a reverse proxy and load balancer is well-documented. The configuration syntax is not the hard part. The decisions are.

Algorithm selection

Three algorithms cover most workloads.

Round-robin (the default). Requests cycle through backends sequentially. Weights let you bias toward higher-capacity servers. Simple, predictable, works well when request processing times are uniform.

    upstream api {
        server api-01:8080 weight=3;
        server api-02:8080 weight=2;
        server api-03:8080 weight=1 backup;
        keepalive 32;
    }

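The backup parameter keeps a server in reserve – it only receives traffic when all non-backup servers are unavailable. With the weights above, api-01 takes three of every five requests and api-02 takes the other two, while api-03 sits idle. This is useful for a smaller instance that can keep the service alive when the primaries fail but shouldn’t take production load normally.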

Least connections. Routes to the backend with the fewest active connections. Better than round-robin when request processing times vary significantly – payment processing, file uploads, database-heavy endpoints. Round-robin can pile requests on a server that’s still processing slow queries while another server sits idle.

    upstream payments {
        least_conn;
        server pay-01:8080;
        server pay-02:8080;
        server pay-03:8080;
    }
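Least connections also takes server weights into account, so the two ideas combine. A minimal sketch, assuming pay-01 is a larger instance than the other two (that capacity difference is hypothetical, not something stated above):

```nginx
upstream payments {
    least_conn;
    # Assumption: pay-01 has roughly twice the capacity of the others.
    server pay-01:8080 weight=2;
    server pay-02:8080;
    server pay-03:8080;
}
```

Connection counts are compared relative to weight, so pay-01 tends to carry roughly twice as many concurrent requests as each of the others before it stops being the preferred target.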

IP hash. Hashes the client IP to select a backend, sending the same client to the same server consistently. For IPv4 addresses only the first three octets feed the hash, so every client in the same /24 lands on the same backend. Necessary for stateful applications where sessions are stored in local memory. Not needed if sessions are externalized to Redis or a database – in that case, round-robin or least-conn is a better choice because IP hash can create uneven distribution when traffic comes from a small number of source IPs (corporate NATs, CDN edges).

    upstream stateful {
        ip_hash;
        server app-01:8080;
        server app-02:8080;
    }
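When a server in an ip_hash group has to come out for maintenance, deleting its line changes which clients map to which backend. The down parameter is meant for this case: the server stays in the hash calculation but receives no traffic. A sketch, assuming app-02 is the one being drained and that a third backend exists (app-03 is hypothetical, added only so the example has somewhere to send traffic besides app-01):

```nginx
upstream stateful {
    ip_hash;
    server app-01:8080;
    # Temporarily out of service. Keeping the line (instead of deleting it)
    # preserves the hash mapping for clients of the other servers.
    server app-02:8080 down;
    server app-03:8080;   # hypothetical third backend, for illustration
}
```

Clients that hashed to app-02 are sent to one of the remaining servers and lose their in-memory session; clients of app-01 and app-03 stay where they were.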

Passive health checking

Nginx doesn’t actively probe backends (that requires Nginx Plus or a third-party module). Instead, it uses passive health checking: if a backend fails a configurable number of times, Nginx removes it from rotation for a timeout period.

server backend-01:8080 max_fails=3 fail_timeout=30s;

max_fails=3 means three failed attempts within the fail_timeout window mark the server as unavailable. What counts as a failed attempt is defined by proxy_next_upstream: connection errors and timeouts always count, while 502/503/504 responses count only if http_502, http_503, and http_504 are listed in that directive. The failures don’t need to be consecutive – any three within the window trigger removal. fail_timeout=30s controls two things: the window in which failures are counted, and how long the server stays out of rotation after being marked down.

After 30 seconds, Nginx tries the server again. If the next request succeeds, the server re-enters rotation. If it fails, the cycle restarts.

The default max_fails=1 is too aggressive for most production setups – a single timeout marks the server as down. Start with 3 and adjust based on your application’s error characteristics.
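Which outcomes count toward max_fails is governed by proxy_next_upstream. Connection errors and timeouts always count; 5xx status codes count only when they are listed. A sketch that makes 502/503/504 responses count as failures and caps retries. The timeout values and the retry cap are illustrative assumptions, not recommendations derived from the text above:

```nginx
upstream api {
    server api-01:8080 max_fails=3 fail_timeout=30s;
    server api-02:8080 max_fails=3 fail_timeout=30s;
}

server {
    location /api/ {
        proxy_pass http://api;

        # Treat these outcomes as failed attempts and retry on another server.
        proxy_next_upstream error timeout http_502 http_503 http_504;

        # Assumed values: try at most two servers, and fail fast on connect.
        proxy_next_upstream_tries 2;
        proxy_connect_timeout 2s;
        proxy_read_timeout 10s;
    }
}
```

Requests with non-idempotent methods such as POST are normally not passed to another server once they have been sent to a backend, unless non_idempotent is added to proxy_next_upstream. For payment-style endpoints that default is usually the safer one.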

Rate limiting

Nginx rate limiting uses a leaky bucket algorithm. Define zones (shared memory segments that track request rates per key), then apply them to locations.

    limit_req_zone $binary_remote_addr zone=api:10m rate=10r/s;
    limit_req_zone $binary_remote_addr zone=login:10m rate=1r/s;

    server {
        location /api/ {
            limit_req zone=api burst=20 nodelay;
            proxy_pass http://api;
        }

        location /auth/login {
            limit_req zone=login burst=5;
            proxy_pass http://api;
        }
    }

The burst parameter allows temporary spikes above the rate: up to that many excess requests are accepted, and anything beyond is rejected (with a 503 by default). nodelay processes burst requests immediately rather than queuing them – better for API traffic where clients expect prompt responses. Without nodelay, burst requests are delayed to enforce the average rate, which is appropriate for login endpoints where you want to genuinely throttle attempts.
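Between the two modes there is a middle ground: the delay parameter (Nginx 1.15.7 and later) serves the first part of a burst immediately and paces the rest. A sketch with an assumed split of 8 undelayed requests out of a burst of 20; the numbers are illustrative only:

```nginx
limit_req_zone $binary_remote_addr zone=api:10m rate=10r/s;

server {
    location /api/ {
        # The first 8 excess requests pass without delay, the rest of the
        # burst (up to 20) is paced at the zone rate, and anything beyond
        # the burst is rejected.
        limit_req zone=api burst=20 delay=8;
        proxy_pass http://api;
    }
}
```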

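Different endpoints need different limits. An API endpoint serving data reads can handle 10 requests per second per client. Because both zones are keyed on $binary_remote_addr, a client here means a source IP, so users behind a shared NAT draw from the same bucket. A login endpoint should be much tighter to limit credential stuffing.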
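By default a rejected request gets a 503 and is logged at error level, which is hard to tell apart from a real outage. A sketch that returns 429 instead and lowers the log level. Both choices are assumptions about what suits your clients and alerting:

```nginx
limit_req_zone $binary_remote_addr zone=login:10m rate=1r/s;

server {
    location /auth/login {
        limit_req zone=login burst=5;

        # Reply 429 Too Many Requests instead of the default 503.
        limit_req_status 429;

        # Log rejections at warn rather than the default error level.
        limit_req_log_level warn;

        proxy_pass http://api;
    }
}
```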

Upstream timing in logs

The default Nginx log format doesn’t include backend timing. Adding upstream variables makes latency debugging possible:

    log_format timed '$remote_addr [$time_local] "$request" $status '
                     'rt=$request_time uct=$upstream_connect_time '
                     'uht=$upstream_header_time urt=$upstream_response_time';

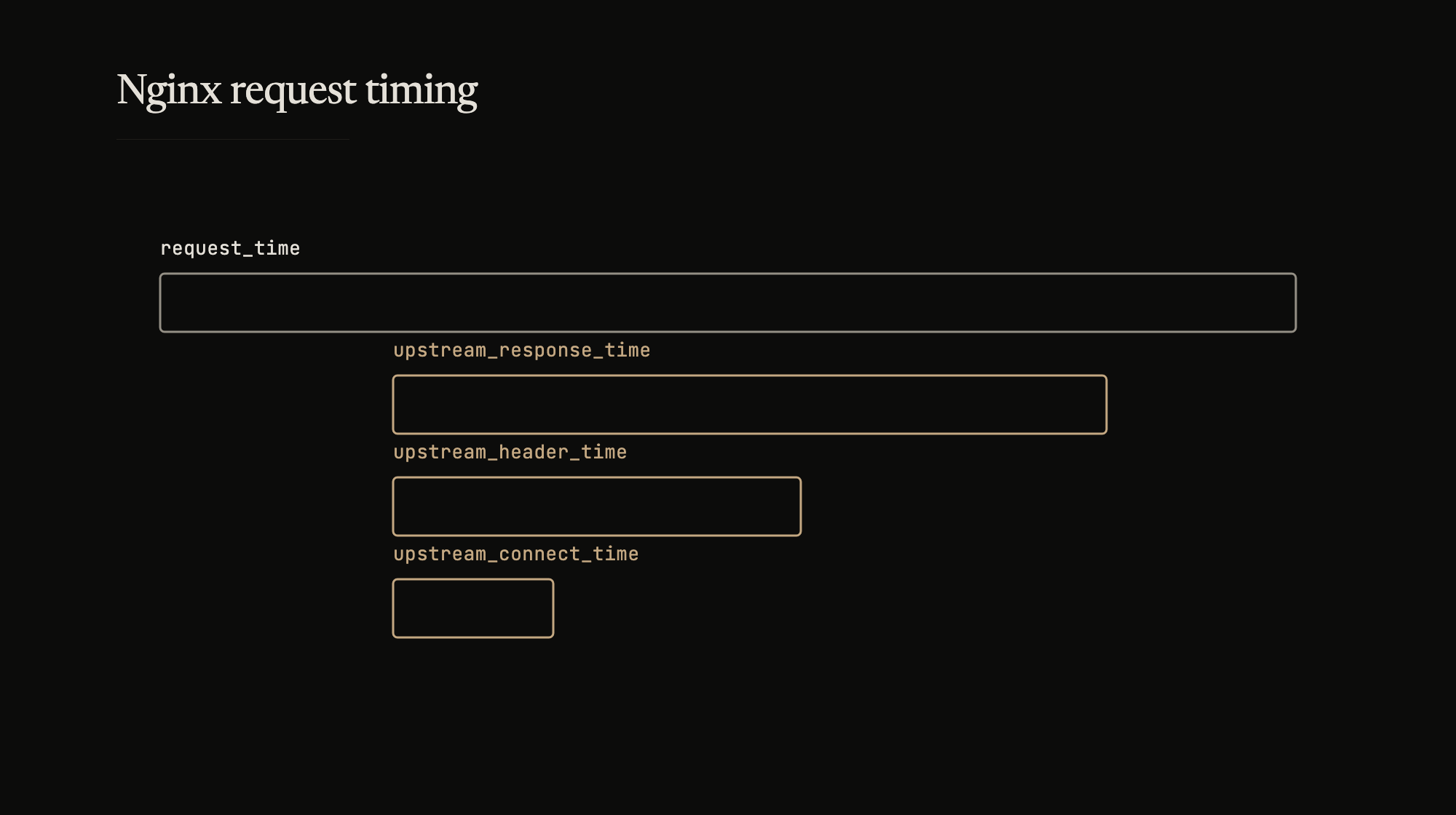

request_time is the total time from when Nginx received the first client byte to when it sent the last byte of the response. upstream_connect_time is how long it took to establish the connection to the backend (the TCP handshake, plus the TLS handshake if the upstream uses TLS). upstream_header_time is how long until the backend’s response header was received. upstream_response_time is the total backend response time. All four are logged in seconds with millisecond resolution.

The gap between upstream_response_time and request_time is Nginx’s own overhead plus client-side network time. When investigating latency, these four numbers tell you whether the bottleneck is the backend, the network, or the proxy itself.
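A format only takes effect once an access_log directive references it. A sketch, assuming the timed format is defined in the http block and that the log path shown suits your system (the path is a placeholder):

```nginx
server {
    # Write this server's access log using the "timed" format defined earlier.
    access_log /var/log/nginx/access.log timed;

    location / {
        proxy_pass http://api;
    }
}
```

When a request is retried on a second backend, each upstream variable holds one value per attempt, separated by commas, so log parsers should not assume a single number per field.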

Keepalive connections

By default, Nginx opens a new connection to the backend for every proxied request. For high-throughput services, the TCP handshake overhead adds up.

    upstream api {
        server api-01:8080;
        server api-02:8080;
        keepalive 32;
    }

    location / {
        proxy_pass http://api;
        proxy_http_version 1.1;
        proxy_set_header Connection "";
    }

keepalive 32 maintains a pool of up to 32 idle connections per worker process. It caps idle connections only; it does not limit how many connections a worker can open to the backends under load. proxy_http_version 1.1 and clearing the Connection header are required – HTTP/1.0 closes connections by default, and Nginx sets Connection: close unless told otherwise.

The right pool size depends on traffic. Too small and connections churn. Too large and idle connections consume file descriptors on the backend. Monitor upstream_connect_time in your logs – a reused pooled connection typically logs a connect time of 0.000, so if connect times are consistently non-zero within the same datacenter, the pool is likely too small and connections are being re-established on many requests.
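Two more upstream-level directives shape how long pooled connections live (both need Nginx 1.15.3 or later). A sketch with assumed values; the pool size and limits below are starting points to tune, not measured recommendations:

```nginx
upstream api {
    server api-01:8080;
    server api-02:8080;

    keepalive 64;             # assumed: a larger idle pool per worker
    keepalive_timeout 60s;    # close upstream connections idle for 60s
    keepalive_requests 1000;  # recycle a connection after 1000 requests
}
```

Keeping keepalive_timeout below the backend’s own idle timeout generally avoids Nginx trying to reuse a connection the backend has already closed.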