We’re building a platform with a social feed. Not a timeline of photos or opinions – a feed of plans. Things people intend to do. The interesting question isn’t “what did my friend share” but “what is my friend’s friend doing this Saturday, and can I join.”

That second question – friend-of-friend discovery – is what made me reach for a graph database.

Why graph

At first glance, a feed is just a list. The interesting part is underneath – a web of relationships: who knows whom, who created what, who joined what.

Relational databases model this with join tables. A friendships table with two foreign keys. A participants table. Each relationship is a row. To answer “show me plans from my extended network,” you’re joining across three or four of these tables, and the database is doing hash joins or nested loops to assemble something that, in a graph, is just walking edges.

Neo4j stores relationships as first-class objects. A knows edge between two user nodes is a physical pointer, not a row in a join table. Traversing from a user to their friends to their friends’ plans is pointer-chasing, not table-scanning. The cost scales with the size of the local neighbourhood, not the whole dataset.
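A toy in-memory version of that idea, just to show why the cost follows the neighbourhood rather than the dataset. This is not how Neo4j lays out data internally; `makeNode` and `plansViaFriends` are names made up for the sketch.

```javascript
// Toy adjacency-list graph: each node holds direct references to its
// neighbours, loosely mimicking a store that follows relationship pointers.
// All names here are hypothetical and exist only for this sketch.
function makeNode(id) {
  return { id: id, knows: [], joined: [] };
}

// Walk user -> friends -> plans the friends joined, counting edges touched.
function plansViaFriends(user) {
  var seen = {};
  var plans = [];
  var edgesTouched = 0;
  user.knows.forEach(function (friend) {
    edgesTouched += 1;
    friend.joined.forEach(function (plan) {
      edgesTouched += 1;
      if (!seen[plan.id]) {
        seen[plan.id] = true;
        plans.push(plan.id);
      }
    });
  });
  return { plans: plans, edgesTouched: edgesTouched };
}

// alice knows bob (both directions); bob joined a trek.
var alice = makeNode('alice');
var bob = makeNode('bob');
var trek = { id: 'trek' };
alice.knows.push(bob);
bob.knows.push(alice);
bob.joined.push(trek);

// A thousand unrelated users exist, but the walk from alice never sees them.
var strangers = [];
for (var i = 0; i < 1000; i++) {
  strangers.push(makeNode('stranger-' + i));
}

var result = plansViaFriends(alice);
console.log(result.plans, result.edgesTouched); // [ 'trek' ] 2
```

The walk touches two edges whether there are ten users in total or ten thousand.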

I looked at the alternatives. MySQL FULLTEXT wasn’t relevant – this isn’t a search problem. MongoDB could store the documents but the queries would be application-side loops. Elasticsearch was the wrong shape entirely – it’s a search engine, not a traversal engine. For this specific data shape – relationships between people and their actions – Neo4j was the obvious fit.

The shape

The graph has users, plans, and edges between them. Users know each other. Users create plans, join plans, get tagged in plans. That’s most of the schema.

knows is bidirectional – confirming a friend request creates edges in both directions. Everything else is directional. Plans have a what, where, when, and a timestamp. We anchor every node to a root node of its type for indexed lookups – all plans connect to a plan root, all users to a user root. It’s how you do global traversals without scanning the entire graph.

The feed for any user comes from three traversals: plans you created, plans your friends created, and – the interesting one – plans your friends joined that you haven’t seen yet. That third query is where the graph earns its keep. If your friend joined a weekend trek organized by someone you’ve never met, you probably want to know about it. That’s where discovery happens – not in your direct connections, but one hop beyond them.

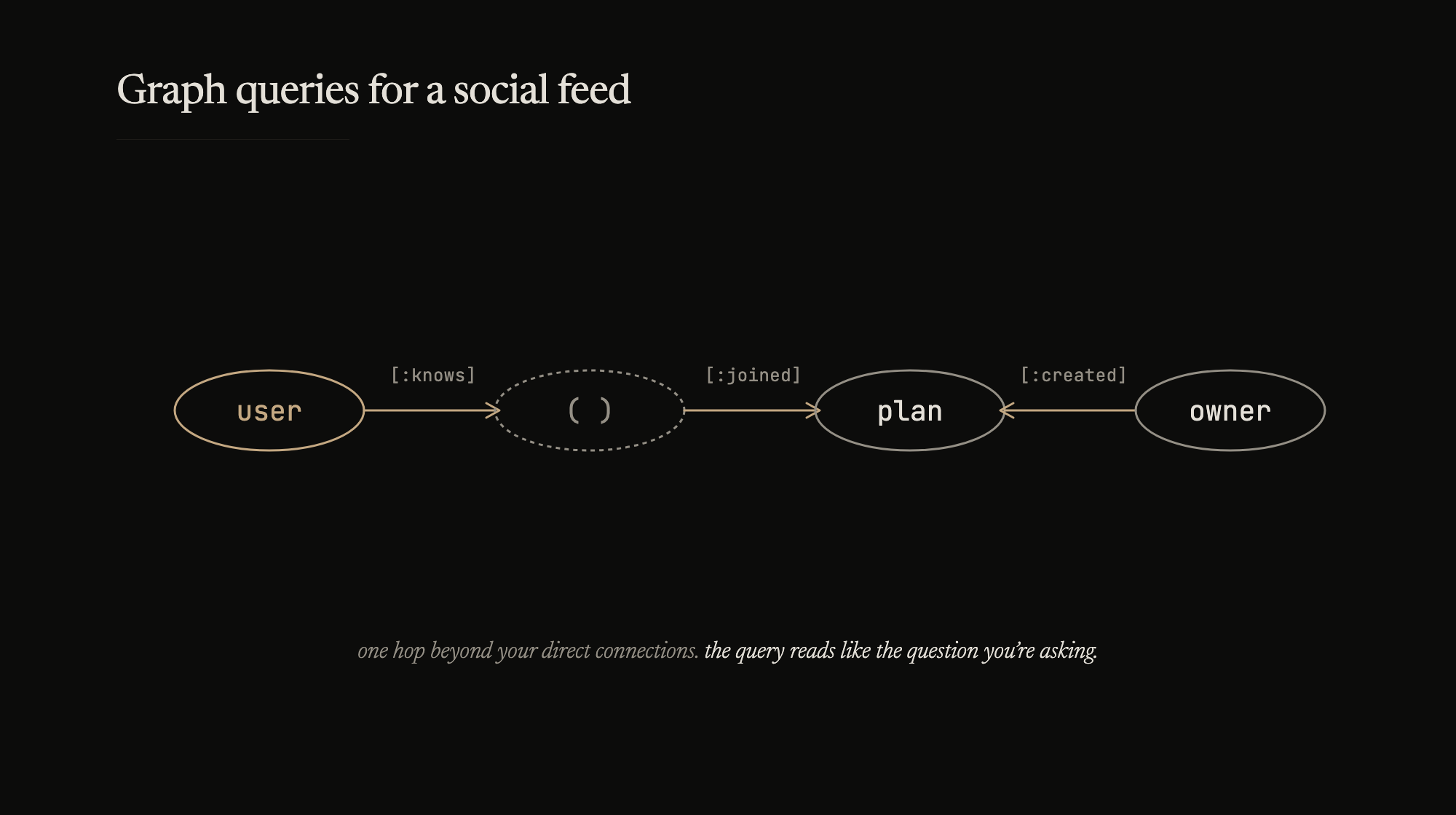

In Cypher, the friend-of-friend discovery looks roughly like this:

    START user=node({userId})
    MATCH (user) -[:knows]-> () -[:joined]-> (plan) <-[:created]- (owner)
    WHERE NOT ((user) -[:created]-> (plan))
    AND NOT ((user) -[:joined]-> (plan))
    RETURN plan, owner
    ORDER BY plan.created_at DESC

The anonymous node – () – is the friend. We don’t care who they are for this query, just the path through them. The WHERE NOT clauses filter out plans the user created or has already joined, so only genuinely new plans come back. Start at a user, walk the graph, find plans. The query reads like the question you’re asking.
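The other two feed traversals follow the same pattern. Here is a rough sketch of all three, run one after another. The first two queries are guesses at the shape, not our production Cypher, and `runCypher(query, params, callback)` is a hypothetical wrapper around whatever the driver exposes, injected so the sketch runs without a database.

```javascript
// Cypher for the three feed traversals. The third is the friend-of-friend
// query; the first two are assumed to follow the same pattern.
var FEED_QUERIES = {
  mine: [
    'START user=node({userId})',
    'MATCH (user)-[:created]->(plan)',
    'RETURN plan, user AS owner',
    'ORDER BY plan.created_at DESC'
  ].join('\n'),
  friends: [
    'START user=node({userId})',
    'MATCH (user)-[:knows]->(owner)-[:created]->(plan)',
    'RETURN plan, owner',
    'ORDER BY plan.created_at DESC'
  ].join('\n'),
  friendsOfFriends: [
    'START user=node({userId})',
    'MATCH (user)-[:knows]->()-[:joined]->(plan)<-[:created]-(owner)',
    'WHERE NOT ((user)-[:created]->(plan))',
    'AND NOT ((user)-[:joined]->(plan))',
    'RETURN plan, owner',
    'ORDER BY plan.created_at DESC'
  ].join('\n')
};

// Run the queries in series and hand back the raw result sets by name.
// Stops at the first error, the way async.series would.
function fetchFeedSources(runCypher, userId, callback) {
  var names = Object.keys(FEED_QUERIES);
  var results = {};
  (function next(i) {
    if (i === names.length) return callback(null, results);
    runCypher(FEED_QUERIES[names[i]], { userId: userId }, function (err, rows) {
      if (err) return callback(err);
      results[names[i]] = rows;
      next(i + 1);
    });
  })(0);
}
```

Each entry in the result is still a raw row set. Merging and deduplication happen afterwards in application code.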

Try expressing that as a SQL join. It works, but it’s three times the code and you spend half your time thinking about the join order instead of the actual question.

Practical bits

We’re using the node-neo4j driver, which talks to Neo4j over HTTP. Every Cypher query is a REST call. The overhead adds up when the feed requires multiple queries per page load. We put an LRU cache in front – 500 entries, 10-second TTL. Short enough that a new plan shows up within about ten seconds. Long enough that a page refresh doesn’t hammer Neo4j.

    var LRU = require('lru-cache');
    var cache = LRU({ max: 500, maxAge: 10 * 1000 });
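How the cache sits in front of the queries, roughly. To keep the sketch dependency-free, `TinyCache` is a small stand-in for lru-cache, not its implementation, and `cachedQuery` and `runCypher` are hypothetical names. The clock is injectable only so expiry can be exercised without waiting.

```javascript
// Minimal LRU cache with a TTL. A stand-in for lru-cache in this sketch.
function TinyCache(max, maxAgeMs, now) {
  this.max = max;
  this.maxAgeMs = maxAgeMs;
  this.now = now || Date.now;
  this.entries = new Map(); // Map keeps insertion order, oldest first
}

TinyCache.prototype.get = function (key) {
  var entry = this.entries.get(key);
  if (!entry) return undefined;
  if (this.now() - entry.storedAt > this.maxAgeMs) {
    this.entries.delete(key); // expired
    return undefined;
  }
  // Re-insert so this key becomes the most recently used.
  this.entries.delete(key);
  this.entries.set(key, entry);
  return entry.value;
};

TinyCache.prototype.set = function (key, value) {
  this.entries.delete(key);
  if (this.entries.size >= this.max) {
    // Evict the least recently used key (first in insertion order).
    this.entries.delete(this.entries.keys().next().value);
  }
  this.entries.set(key, { value: value, storedAt: this.now() });
};

// Cache-aside wrapper: serve from cache, otherwise run the query and store it.
// `runCypher(query, params, callback)` is a hypothetical driver wrapper.
function cachedQuery(cache, runCypher, query, params, callback) {
  var key = query + '|' + JSON.stringify(params);
  var hit = cache.get(key);
  if (hit !== undefined) return callback(null, hit);
  runCypher(query, params, function (err, rows) {
    if (err) return callback(err);
    cache.set(key, rows);
    callback(null, rows);
  });
}
```

The key is the query text plus serialized params, so the same query for two users never collides. One caveat: `JSON.stringify` is sensitive to property order, so params should be built the same way every time.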

The feed aggregation happens in application code – fire the queries with async.series, merge results by uid, sort by timestamp, deduplicate. We spent a day debugging duplicate plans in the feed before realizing a bidirectional knows edge means the friend-of-friend traversal can reach the same plan through two different paths. The dedup isn’t optional.
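The merge step, sketched as a pure function. It assumes each row has already been flattened to an object with a `uid` and a numeric `created_at`; that shape and the name `mergeFeed` are assumptions for the example.

```javascript
// Merge several result sets into one feed: dedupe by uid, newest first.
// Assumes items look like { uid, created_at } with a numeric timestamp.
function mergeFeed(resultSets) {
  var byUid = {};
  resultSets.forEach(function (rows) {
    rows.forEach(function (plan) {
      // First occurrence wins; later copies of the same plan are dropped.
      if (!Object.prototype.hasOwnProperty.call(byUid, plan.uid)) {
        byUid[plan.uid] = plan;
      }
    });
  });
  return Object.keys(byUid)
    .map(function (uid) { return byUid[uid]; })
    .sort(function (a, b) { return b.created_at - a.created_at; });
}

var feed = mergeFeed([
  [{ uid: 'p1', created_at: 100 }],
  [{ uid: 'p2', created_at: 300 }],
  // The same plan reached twice, plus one already seen in another set.
  [{ uid: 'p3', created_at: 200 }, { uid: 'p3', created_at: 200 }, { uid: 'p2', created_at: 300 }]
]);
console.log(feed.map(function (p) { return p.uid; })); // [ 'p2', 'p3', 'p1' ]
```

Within a single query, RETURN DISTINCT would also collapse repeated rows, but plans that show up in more than one of the three result sets still need the application-side pass.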

It’s not elegant. But it’s correct, and at our current scale, it’s fast.

What’s next

Neo4j 2.2 just shipped, and labels and schema indexes have been part of Neo4j since 2.0. They might eventually replace our root anchor nodes – MATCH (u:User {email: {email}}) instead of indexed node lookups through a root. We started before labels were stable, so the old pattern is baked in. Something to look into when we have the time.

The N+1 problem is real. Fetching the feed is one set of queries. Then for each plan, we fetch attendees and tagged users separately. With a hundred plans on screen, that’s at least a hundred extra round trips. The fix is a single Cypher query with COLLECT to aggregate everything in one traversal. Haven’t gotten to it.
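What that fix might look like, as an untested sketch. The `tagged` relationship name and its direction are assumptions, the WHERE NOT filters are left out for brevity, and the runner is a stub that only counts round trips. The per-plan version here assumes two lookups per plan (attendees, then tagged users).

```javascript
// Untested sketch of one query that collects people per plan.
// Assumption: tagged users point at plans via a `tagged` relationship.
var FEED_WITH_PEOPLE = [
  'START user=node({userId})',
  'MATCH (user)-[:knows]->()-[:joined]->(plan)<-[:created]-(owner)',
  'OPTIONAL MATCH (attendee)-[:joined]->(plan)',
  'OPTIONAL MATCH (taggedUser)-[:tagged]->(plan)',
  'RETURN plan, owner,',
  '  COLLECT(DISTINCT attendee) AS attendees,',
  '  COLLECT(DISTINCT taggedUser) AS tagged'
].join('\n');

// N+1 style: two extra lookups per plan. `run(query, params)` is a
// hypothetical synchronous stand-in for a driver call; the first argument
// is just a label here, not real Cypher.
function loadPeoplePerPlan(plans, run) {
  return plans.map(function (plan) {
    return {
      plan: plan,
      attendees: run('attendees', { planId: plan.uid }),
      tagged: run('tagged', { planId: plan.uid })
    };
  });
}

// Batched style: one call whose rows already carry the collected lists.
function loadPeopleBatched(userId, run) {
  return run(FEED_WITH_PEOPLE, { userId: userId });
}

// Stub runner that returns no rows and counts how often it is called.
function makeCountingRunner() {
  var runner = function () {
    runner.roundTrips += 1;
    return [];
  };
  runner.roundTrips = 0;
  return runner;
}

var plans = [];
for (var i = 0; i < 100; i++) plans.push({ uid: 'plan-' + i });

var perPlan = makeCountingRunner();
loadPeoplePerPlan(plans, perPlan);

var batched = makeCountingRunner();
loadPeopleBatched(42, batched);

console.log(perPlan.roundTrips, batched.roundTrips); // 200 1
```

The DISTINCT inside COLLECT matters: two OPTIONAL MATCH clauses multiply rows, so without it each attendee would repeat once per tagged user.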

The Bolt protocol is supposedly coming to replace the REST API. That would cut the HTTP overhead per query. For now the cache handles it.

None of these are blocking us. The graph model is right for this problem. The queries match the shape of the questions. Everything else is plumbing.