A service calls a dependency. The dependency is slow or down. The service waits, ties up a goroutine, maybe a connection. Multiply that by every request in flight, and the caller is now as broken as the dependency it called.

Circuit breaking stops this. Instead of waiting on something that’s failing, stop calling it. Let it recover. Try again later.

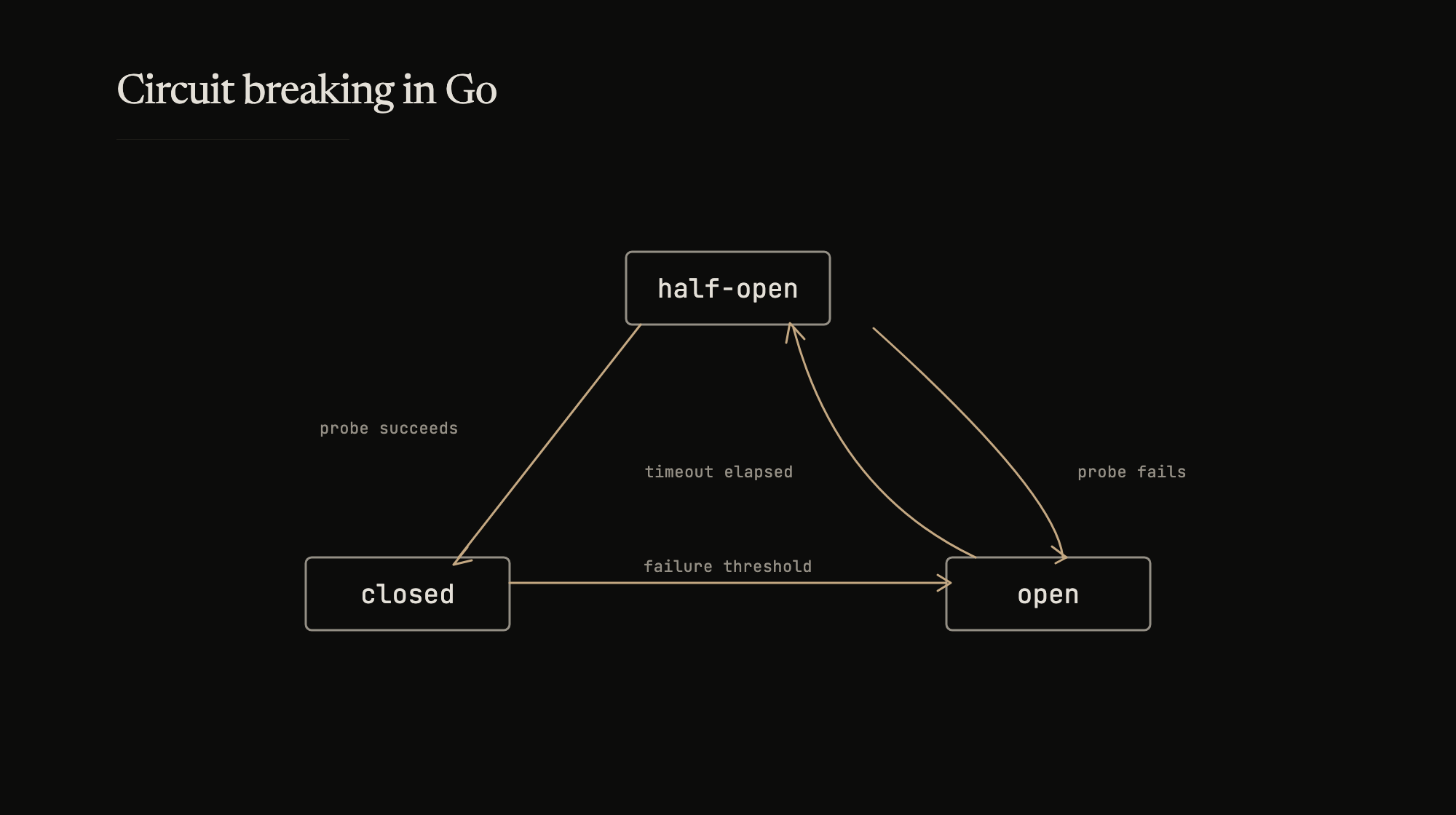

Three states

A circuit breaker wraps external calls and tracks their outcomes.

Closed – normal operation. Requests flow through. Failures are counted.

Open – failure threshold exceeded. Requests are rejected immediately without calling the dependency. A timer starts.

Half-open – timer expired. One request is allowed through as a probe. If it succeeds, the circuit closes. If it fails, the circuit reopens.

The transition logic is simple: count failures in closed state, trip at a threshold, wait a timeout, probe, decide.

sony/gobreaker

For most cases, reach for an existing library. sony/gobreaker is well-maintained and covers the common configuration surface:

    // Assumes the github.com/sony/gobreaker package is imported.
    settings := gobreaker.Settings{
        Name:        "UserService",
        MaxRequests: 3,                // requests allowed while half-open
        Interval:    10 * time.Second, // counting window while closed
        Timeout:     30 * time.Second, // time spent open before probing
        ReadyToTrip: func(counts gobreaker.Counts) bool {
            // Trip on the third consecutive failure.
            return counts.ConsecutiveFailures > 2
        },
        OnStateChange: func(name string, from, to gobreaker.State) {
            log.Printf("circuit %s: %s -> %s", name, from, to)
        },
    }

    cb := gobreaker.NewCircuitBreaker(settings)

    result, err := cb.Execute(func() (interface{}, error) {
        return callUserService()
    })

MaxRequests limits how many requests are allowed through in half-open state; the circuit closes again once that many consecutive probes succeed. Interval is the window for counting failures in closed state – after it elapses, counts reset. Timeout is how long the circuit stays open before transitioning to half-open.

ReadyToTrip is where the policy lives. Consecutive failures is the simplest trigger. Failure ratio over a window is more nuanced. The function receives the full counts (total requests, total successes and failures, consecutive successes and failures), so the logic can be as sophisticated as needed.
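A failure-ratio policy might look like the sketch below. To keep it compilable without the dependency, `Counts` here is a local stand-in that mirrors the fields I understand `gobreaker.Counts` to export; `failureRatioTrip` and its parameters are hypothetical names. The minimum-request guard matters: without it, a single failed request is a 100% failure ratio.

```go
package main

// Counts is a local stand-in mirroring the exported fields of
// gobreaker.Counts (an assumption; check the library for the real type).
type Counts struct {
	Requests             uint32
	TotalSuccesses       uint32
	TotalFailures        uint32
	ConsecutiveSuccesses uint32
	ConsecutiveFailures  uint32
}

// failureRatioTrip returns a ReadyToTrip-style policy that trips once at
// least minRequests have been seen in the current window and the share of
// failures is maxRatio or higher.
func failureRatioTrip(minRequests uint32, maxRatio float64) func(Counts) bool {
	return func(c Counts) bool {
		// Too little traffic to judge; also avoids dividing by zero.
		if c.Requests == 0 || c.Requests < minRequests {
			return false
		}
		ratio := float64(c.TotalFailures) / float64(c.Requests)
		return ratio >= maxRatio
	}
}
```

With the real library, the same closure body would take a `gobreaker.Counts` argument and be assigned to the `ReadyToTrip` field. The window the ratio is computed over is whatever `Interval` is set to.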

Custom implementation

When you need concurrency limiting, atomic state transitions without a mutex on the hot path, or behavior that gobreaker doesn’t expose, a custom implementation is straightforward:

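    type State int32

    const (
        StateClosed State = iota
        StateHalfOpen
        StateOpen
    )

    var (
        ErrCircuitOpen    = errors.New("circuit breaker is open")
        ErrMaxConcurrency = errors.New("max concurrency exceeded")
    )

    type CircuitBreaker struct {
        name           string
        threshold      int32         // failures that trip the circuit
        timeout        time.Duration // time spent open before a probe is allowed
        maxConcurrency int32         // in-flight cap; 0 disables the check

        // The fields below are accessed only through sync/atomic.
        state        int32
        failureCount int32
        lastFailure  int64 // UnixNano of the most recent failure
        currentReqs  int32

        mu            sync.RWMutex
        onStateChange func(from, to State)
    }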

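State, failure count, last failure timestamp (Unix nanoseconds), and concurrent request count are all int32/int64 for atomic access. The mutex is taken only inside setState, where it serializes the state write and the onStateChange callback; the common path through Execute never takes it.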

    // Execute runs fn if the breaker allows it. ctx is not consulted here;
    // fn is expected to honor cancellation itself.
    func (cb *CircuitBreaker) Execute(ctx context.Context, fn func() error) error {
        // Check the concurrency cap before the state machine. Rejecting a
        // request after canExecute has claimed the half-open probe would
        // leave the circuit stuck in half-open, since no result is recorded.
        current := atomic.AddInt32(&cb.currentReqs, 1)
        defer atomic.AddInt32(&cb.currentReqs, -1)
        if cb.maxConcurrency > 0 && current > cb.maxConcurrency {
            return ErrMaxConcurrency
        }

        if !cb.canExecute() {
            return ErrCircuitOpen
        }

        err := fn()
        cb.recordResult(err)
        return err
    }

    func (cb *CircuitBreaker) canExecute() bool {
        state := State(atomic.LoadInt32(&cb.state))
        switch state {
        case StateClosed:
            return true
        case StateOpen:
            // Stay open until the timeout has elapsed since the last failure.
            now := time.Now().UnixNano()
            last := atomic.LoadInt64(&cb.lastFailure)
            if now-last < cb.timeout.Nanoseconds() {
                return false
            }
            // Timeout elapsed: try to claim the half-open probe slot.
            // CAS ensures exactly one goroutine transitions. This path does
            // not go through setState, so onStateChange is not invoked.
            return atomic.CompareAndSwapInt32(&cb.state, int32(StateOpen), int32(StateHalfOpen))
        case StateHalfOpen:
            // Already probing: reject until the probe completes.
            return false
        default:
            return false
        }
    }

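Recording results drives the state machine. A failure in closed state increments the counter and trips at threshold. A failure in half-open immediately reopens. A success in half-open resets the counter and closes the circuit. A success in closed state leaves the counter untouched, so the threshold applies to total failures since the circuit last closed, not to consecutive ones.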

    func (cb *CircuitBreaker) recordResult(err error) {
        if err != nil {
            count := atomic.AddInt32(&cb.failureCount, 1)
            atomic.StoreInt64(&cb.lastFailure, time.Now().UnixNano())

            state := State(atomic.LoadInt32(&cb.state))
            // Trip at the threshold when closed; reopen on a failed probe.
            if (state == StateClosed && count >= cb.threshold) || state == StateHalfOpen {
                cb.setState(StateOpen)
            }
        } else if State(atomic.LoadInt32(&cb.state)) == StateHalfOpen {
            // Successful probe: start from a clean count and close.
            atomic.StoreInt32(&cb.failureCount, 0)
            cb.setState(StateClosed)
        }
    }

    func (cb *CircuitBreaker) setState(newState State) {
        cb.mu.Lock()
        defer cb.mu.Unlock()

        old := State(atomic.LoadInt32(&cb.state))
        if old == newState {
            return
        }
        atomic.StoreInt32(&cb.state, int32(newState))

        // Not reached by the callers shown here: the open -> half-open
        // transition is done with a CAS in canExecute.
        if newState == StateHalfOpen {
            atomic.StoreInt32(&cb.failureCount, 0)
        }

        // The callback runs while mu is held, so it should return quickly.
        if cb.onStateChange != nil {
            cb.onStateChange(old, newState)
        }
    }

The concurrency check on Execute doubles as a bulkhead – each service gets its own breaker, and each breaker caps in-flight requests. One saturated dependency can’t tie up an unbounded number of the caller’s goroutines.

Composing with retries

Circuit breakers and retries complement each other, but the interaction matters. Retries should not fire when the circuit is open – that defeats the purpose.

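    func CallWithRetry(ctx context.Context, cb *CircuitBreaker, fn func() error) error {
        // One initial attempt plus one retry after each delay.
        backoff := []time.Duration{100 * time.Millisecond, 500 * time.Millisecond, 1 * time.Second}

        for attempt := 0; ; attempt++ {
            err := cb.Execute(ctx, fn)
            if err == nil {
                return nil
            }
            // An open circuit will not recover within the backoff; give up now.
            if errors.Is(err, ErrCircuitOpen) {
                return err
            }
            // Out of retries: report the last error.
            if attempt == len(backoff) {
                return err
            }

            // Wait before the next attempt, unless the caller cancels first.
            select {
            case <-ctx.Done():
                return ctx.Err()
            case <-time.After(backoff[attempt]):
            }
        }
    }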

The ErrCircuitOpen check breaks out immediately. No point sleeping and retrying when the breaker has already decided the dependency is down.

Fallback

When the circuit is open, the caller still needs to respond. Cache, default, or degraded response – whatever the product can tolerate:

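    func GetUserProfile(ctx context.Context, userID string) (*UserProfile, error) {
        var profile *UserProfile
        err := userServiceCB.Execute(ctx, func() error {
            var err error
            profile, err = getUserFromService(userID)
            return err
        })
        if err == nil {
            return profile, nil
        }

        // Any failure, including ErrCircuitOpen, falls back to the cache.
        // Assuming getCachedProfile returns nil on a miss, surface the
        // original error then instead of returning (nil, nil).
        if cached := getCachedProfile(userID); cached != nil {
            return cached, nil
        }
        return nil, err
    }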

The fallback is a product decision, not a systems decision. What’s worse – showing stale data or showing an error? That determines the fallback strategy.

Tuning

Threshold, timeout, and concurrency limit are all deployment-specific. Low thresholds (3-5 failures) trip fast – good for user-facing paths where latency matters. Higher thresholds (10-20) tolerate intermittent errors – better for batch or internal services.

Timeout reflects recovery characteristics. A database might recover in 30 seconds. An overloaded third-party API might need minutes. Start conservative, adjust from monitoring data.

The most useful operational signal is the state change itself. Alert on circuit breaker transitions, not just downstream errors. An open circuit means the breaker is doing its job – but it also means something downstream needs attention.
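One way to get that signal is to count transitions per breaker and alert on the count. The sketch below is an in-memory stand-in for a metrics client; `TransitionCounter`, `Hook`, and `Opened` are hypothetical names. `Hook` returns a callback with the same shape as the `onStateChange` field in the custom breaker, and `State` is redeclared only to keep the example self-contained.

```go
package main

import "sync"

type State int32

const (
	StateClosed State = iota
	StateHalfOpen
	StateOpen
)

// transition identifies one state change on one named breaker.
type transition struct {
	breaker  string
	from, to State
}

// TransitionCounter is a hypothetical in-memory stand-in for a metrics client.
type TransitionCounter struct {
	mu     sync.Mutex
	counts map[transition]int
}

func NewTransitionCounter() *TransitionCounter {
	return &TransitionCounter{counts: make(map[transition]int)}
}

// Hook returns a callback suitable for a func(from, to State) field.
func (c *TransitionCounter) Hook(breaker string) func(from, to State) {
	return func(from, to State) {
		c.mu.Lock()
		defer c.mu.Unlock()
		c.counts[transition{breaker, from, to}]++
	}
}

// Opened reports how many times the named breaker has moved into StateOpen,
// whether from closed or from a failed half-open probe.
func (c *TransitionCounter) Opened(breaker string) int {
	c.mu.Lock()
	defer c.mu.Unlock()
	n := 0
	for t, count := range c.counts {
		if t.breaker == breaker && t.to == StateOpen {
			n += count
		}
	}
	return n
}
```

The callback only takes a short lock and increments a map entry, which matters if it is invoked while the breaker holds its own mutex.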