I needed rate limiting. Not a sophisticated system – something that stops a single IP from hammering the app. The options were a Redis-backed solution or an npm module I hadn’t vetted. I wrote one instead.

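```javascript
// In-memory, per-process state, keyed by 'rl:<ip>'.
var hits = {};    // request count in the current window
var timers = {};  // { timeout, resetAt } for each active window

function ratelimit(max, mins) {
  max = max || 600;
  mins = mins || 1;
  var windowMs = 60 * mins * 1000;

  return function(req, res, next) {
    // Behind nginx, the socket address is the proxy; x-real-ip carries the client.
    var ip = req.headers['x-real-ip'] || req.socket.remoteAddress;
    var key = 'rl:' + ip;
    var n = hits[key] || 0;

    res.setHeader('X-Ratelimit-Limit', max);

    if (n >= max) {
      // Seconds until this IP's window resets. timers[key] always exists here,
      // because the request that created hits[key] also created it.
      var retry = Math.max(0, Math.ceil((timers[key].resetAt - Date.now()) / 1000));
      res.setHeader('X-Ratelimit-Remaining', 0);
      res.setHeader('X-Ratelimit-Message', 'Enhance Your Calm');
      res.setHeader('X-Ratelimit-Retry-After', retry + 's');
      res.setHeader('Retry-After', retry); // standard header, in seconds
      res.statusCode = 420;
      return res.end('Rate limit exceeded. Try again in ~' + retry + 's.');
    }

    hits[key] = n + 1;
    res.setHeader('X-Ratelimit-Remaining', max - hits[key]);

    // First request in a window: record when it ends and schedule the reset.
    // (Recording resetAt avoids reading Node's private Timeout internals.)
    if (!timers[key]) {
      timers[key] = {
        resetAt: Date.now() + windowMs,
        timeout: setTimeout(function() {
          delete hits[key];
          delete timers[key];
        }, windowMs)
      };
    }

    next();
  };
}
```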

That’s it. Wire it into Express as middleware:

```javascript
app.use(ratelimit(600, 1));
```

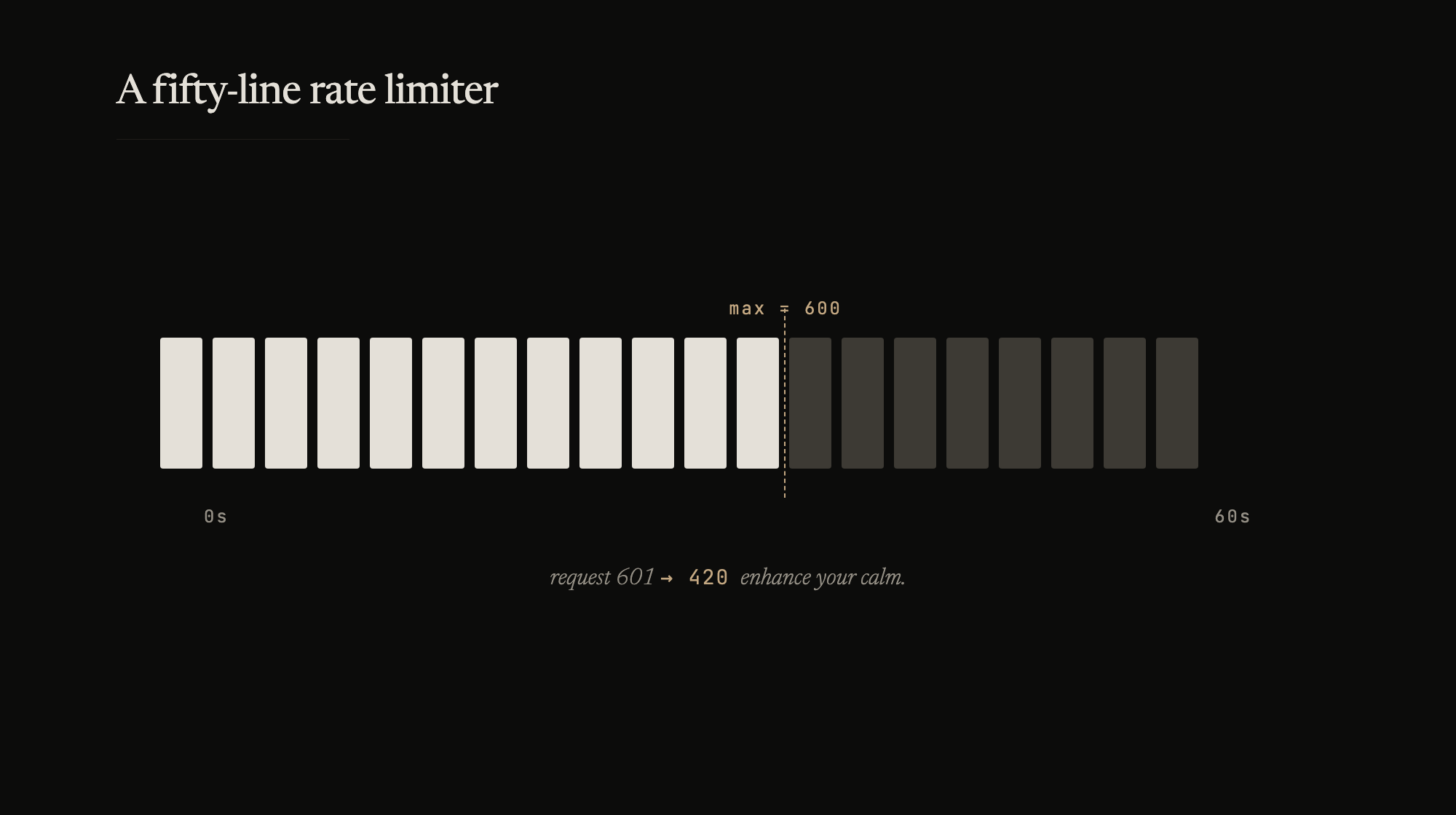

600 requests per IP per minute. Request 601 gets a 420, and so does everything after it until that IP’s window resets.

How it works

Two plain objects – hits and timers. When an IP makes its first request, hits gets a key and timers stores a setTimeout that clears both keys once the window expires. Every subsequent request from that IP increments the counter. When the counter reaches max, the middleware short-circuits with a 420 response and an X-Ratelimit-Retry-After header. This is a fixed window: it starts at the IP’s first request and resets all at once, rather than sliding.
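The mechanics are easier to see with the timers taken out. Below is a minimal sketch of the same fixed-window idea with the clock passed in explicitly, so it can be stepped through without waiting a minute. It is a variation, not the middleware above: `createFixedWindow` and its return shape are hypothetical, and it expires a window lazily on the next request instead of with setTimeout.

```javascript
// Hypothetical variation of the limiter's core: a fixed-window counter with
// an injected clock (milliseconds). Windows expire lazily, checked on each
// hit, instead of via setTimeout, so the logic can be run deterministically.
function createFixedWindow(max, windowMs) {
  var windows = {}; // key -> { count, resetAt }

  return function hit(key, now) {
    var w = windows[key];

    // No window yet, or the old one has ended: start a fresh window.
    if (!w || now >= w.resetAt) {
      w = windows[key] = { count: 0, resetAt: now + windowMs };
    }

    if (w.count >= max) {
      return {
        allowed: false,
        remaining: 0,
        retryAfterSec: Math.ceil((w.resetAt - now) / 1000)
      };
    }

    w.count += 1;
    return { allowed: true, remaining: max - w.count, retryAfterSec: 0 };
  };
}

// Usage: 3 requests per 60 s.
var hit = createFixedWindow(3, 60000);
hit('rl:1.2.3.4', 0);     // allowed, remaining 2
hit('rl:1.2.3.4', 1000);  // allowed, remaining 1
hit('rl:1.2.3.4', 2000);  // allowed, remaining 0
hit('rl:1.2.3.4', 30000); // blocked, retryAfterSec 30
hit('rl:1.2.3.4', 60000); // new window: allowed, remaining 2
```

Lazy expiry drops the per-IP timer, but keys for IPs that never come back are never deleted; the setTimeout approach in the middleware doesn’t have that problem.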

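The x-real-ip check is for nginx – when the app sits behind a reverse proxy, req.socket.remoteAddress is the proxy, not the client. X-Real-IP is the header nginx passes the client address in, when it’s configured to (proxy_set_header X-Real-IP $remote_addr). The catch: with no proxy in front, a client can send its own X-Real-IP and get a fresh counter on every request, so the header should only be trusted when nginx is actually there.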
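One way to make that trust explicit is a small helper that only reads the header when told a proxy is in front. This is a sketch; `clientIp` and its `trustProxy` flag are hypothetical names, not part of the middleware above.

```javascript
// Hypothetical helper: read X-Real-IP only when the app is known to sit
// behind a proxy that sets it (e.g. nginx with
// `proxy_set_header X-Real-IP $remote_addr;`). Otherwise fall back to the
// socket address, which a client cannot forge via headers.
// Node lowercases incoming header names, hence 'x-real-ip'.
function clientIp(req, trustProxy) {
  if (trustProxy) {
    var real = req.headers['x-real-ip'];
    if (typeof real === 'string' && real.trim().length > 0) {
      return real.trim();
    }
  }
  return req.socket.remoteAddress;
}

// Usage with a stand-in request object.
var fakeReq = {
  headers: { 'x-real-ip': '203.0.113.7' },
  socket: { remoteAddress: '127.0.0.1' }
};
clientIp(fakeReq, true);  // '203.0.113.7'
clientIp(fakeReq, false); // '127.0.0.1'
```

In the middleware, the `var ip = ...` line would become `var ip = clientIp(req, true);` on a server that is always behind nginx.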

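HTTP 420 isn’t in the spec. Twitter used it for rate limiting and it stuck. “Enhance Your Calm” is their message too. It felt right. If generic clients need to understand the response, 429 Too Many Requests (RFC 6585) is the standard code; switching is a one-line change to res.statusCode.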

What it doesn’t survive

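A server restart clears all counters. A determined attacker rotating IPs walks right through it. It only works in a single process – if I’m running the app in a cluster, each worker has its own hits object and the limit is effectively multiplied by the number of workers. And because the window is fixed rather than sliding, a client that times it right can get up to 2 × max requests through in a short burst on either side of a reset.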
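The cluster point is plain arithmetic, but a small simulation makes it concrete. This sketch assumes requests are dispatched round-robin across workers (a simplification; real balancing varies) and gives each simulated worker its own counter, the way each worker would get its own hits object. `simulateWorkers` is a hypothetical name.

```javascript
// Each simulated worker keeps its own count, as each cluster worker keeps
// its own `hits` object. Requests from one IP within a single window are
// dispatched round-robin (an assumption). Returns how many were allowed.
function simulateWorkers(workers, max, total) {
  var counts = new Array(workers).fill(0);
  var allowed = 0;
  for (var i = 0; i < total; i++) {
    var w = i % workers; // round-robin dispatch
    if (counts[w] < max) {
      counts[w] += 1;
      allowed += 1;
    }
  }
  return allowed;
}

simulateWorkers(1, 600, 5000); // 600
simulateWorkers(4, 600, 5000); // 2400, i.e. 4 × 600
```

With uneven dispatch and enough requests, the allowed total lands somewhere between max and workers × max.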

None of that matters for what I need. One server, one process, modest traffic. The rate limiter exists to catch accidental abuse – a misbehaving scraper, a retry loop stuck in a bug, someone refreshing too fast. For that, fifty lines and two objects in memory is enough.